TLDR

- Finance and healthcare organizations are moving away from large cloud-hosted AI models toward private, local-first AI agent frameworks for better control and compliance.

- Cloud-based monolithic AI models create risks around data privacy, residency, auditability, vendor lock-in, and regulatory compliance.

- Regulated industries require AI systems that prioritize security, explainability, and controllable infrastructure over raw model capability.

- Local-first AI frameworks run models and orchestration layers within private infrastructure, ensuring sensitive data never leaves the organization’s environment.

- Agent frameworks add orchestration, memory, reasoning, and tool usage capabilities, enabling specialized AI agents to collaborate securely.

- Finance companies are using private AI agents for fraud detection, transaction analysis, regulatory document review, and compliance workflows.

- Healthcare organizations are deploying local-first AI for clinical decision support, patient data processing, and prior authorization automation while protecting PHI.

- Modern private AI stacks rely on open-weight models like Llama 3.1 and Mistral, combined with orchestration, RAG pipelines, and observability layers.

- While local-first AI increases operational complexity and infrastructure costs, it offers stronger data sovereignty and compliance advantages.

- Regulations like the EU AI Act, HIPAA, GDPR, and NIST frameworks are accelerating the adoption of private, accountable AI architectures.

A risk officer at a major global bank recently asked a seemingly simple question during a board meeting: "Where exactly does our data go when a junior analyst queries GPT-4 for a market summary?"

The room went silent. The answer is that the data leaves the bank's secure perimeter, travels across the public internet, and resides on a third-party server. For industries governed by strict privacy laws and fiduciary duties, this reality is more than a technical detail. It is a fundamental liability.

This question is driving one of the most consequential shifts in enterprise AI adoption today. While the initial wave of generative AI was defined by a rush toward massive, cloud-hosted monolithic models, the tide is turning. Finance and healthcare institutions are moving away from these black-box systems in favor of private, local-first agent frameworks.

The move is driven by a need for private AI that respects data sovereignty and meets the rigid compliance standards of regulated industries. These organizations are demanding a fundamentally different architecture that prioritizes control without sacrificing the intelligence required to stay competitive.

The Monolithic AI Problem for Regulated Industries

To understand why a shift is happening, we must define the monolithic model approach. In the context of AI, monolithic refers to centralized systems where inference, data processing, and model control are handled by a single external vendor.

Centralized Inference and Black-Box Control

When a firm uses a cloud-based AI, they are sending their most sensitive proprietary information to a black box. The vendor controls the model weights, the training data, and the hardware. For a hospital or a hedge fund, this creates a significant visibility gap. They cannot audit the model’s internal decision-making process or guarantee that the vendor will not change the model’s behavior overnight.

The Data Pipeline and Residency Problem

Every API call to a cloud provider is a potential data egress event. In healthcare, protected health information (PHI) is covered by HIPAA. In finance, client data falls under SEC and FINRA rules. Sending this data to a cloud server can conflict with data residency requirements, especially in regions like the European Union, where the GDPR restricts transfers of personal data outside the bloc and the EU AI Act adds further obligations for high-risk systems.

Vendor Lock-in and Model Deprecation

OpenAI and Google frequently update their models. While an update from GPT-4 to GPT-4o might seem like an improvement, it can disrupt enterprise workflows that rely on consistent, predictable outputs. Regulated industries require auditable model versioning. If a regulator asks why a specific loan was denied six months ago, the bank must be able to recreate that exact inference environment. Cloud providers rarely offer this level of granular, long-term version control.
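One way to make a past inference reproducible is to record a fingerprint of everything that shaped it. The sketch below is a minimal illustration, assuming the weights are stored locally; the function and field names (`inference_fingerprint`, `weights_sha256`, and so on) are hypothetical and not taken from any specific framework.

```python
import hashlib
import json


def sha256_bytes(data: bytes) -> str:
    """Return the hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def inference_fingerprint(weights: bytes, prompt_template: str, params: dict) -> dict:
    """Build a record identifying the exact inference setup (illustrative fields)."""
    record = {
        "weights_sha256": sha256_bytes(weights),
        "template_sha256": sha256_bytes(prompt_template.encode("utf-8")),
        "params": params,
    }
    # Canonical JSON (sorted keys) so the same setup always hashes the same way.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    record["fingerprint"] = sha256_bytes(canonical)
    return record


# Stand-in bytes; a real deployment would hash the weight files on disk.
params = {"temperature": 0.0, "seed": 7}
fp_a = inference_fingerprint(b"fake-weights-v1", "Assess loan: {application}", params)
fp_b = inference_fingerprint(b"fake-weights-v2", "Assess loan: {application}", params)
print(fp_a["fingerprint"] != fp_b["fingerprint"])
```

Storing the fingerprint next to each decision lets an auditor confirm months later that the archived weights, template, and sampling parameters are the ones that produced it.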

What is the difference between a monolithic AI model and an agent framework?

A monolithic model is a single, large program that tries to do everything in one go. An agent framework is a modular system where a smaller brain (the LLM) coordinates several specialized tools and workers to complete complex tasks step by step.

What Regulated Industries Actually Need From AI

Financial and healthcare organizations do not need the smartest model in the world for every task. They need the most compliant and controllable model.

The Finance Regulatory Landscape

In the United States, the SEC and FINRA have made it clear that AI usage does not absolve a firm of its fiduciary duties. SEC Regulation S-P, amended in 2024 with compliance deadlines for smaller firms falling in 2026, requires covered firms, including smaller investment advisers, to oversee the service providers that touch client data, which includes AI vendors. Under these rules and related SEC guidance:

- Firms must have written policies for AI accuracy and bias.

- Every data interaction must be logged and retrievable for audits.

- "AI washing" or misleading clients about AI capabilities can lead to heavy enforcement actions.

The Healthcare Regulatory Landscape

Healthcare providers operate under HIPAA's minimum necessary standard. This means they should only use the specific data points required for a task. Massive cloud models often ingest more context than is necessary, creating a larger attack surface. Organizations must comply with HIPAA in the US and, in the UK, with the NHS Data Security and Protection Toolkit, which replaced the older Information Governance (IG) Toolkit.
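The minimum necessary idea can be enforced in code before any record reaches a model. The sketch below is a hedged illustration: the task names, the field names, and the `TASK_FIELDS` policy table are all assumptions made up for this example, and the patient record is fake.

```python
# Hypothetical policy: which fields each task is allowed to see.
TASK_FIELDS = {
    "prior_authorization": {"diagnosis_code", "procedure_code", "clinical_notes"},
    "appointment_reminder": {"first_name", "appointment_time"},
}


def minimum_necessary(record: dict, task: str) -> dict:
    """Return only the fields the given task is permitted to use."""
    allowed = TASK_FIELDS.get(task)
    if allowed is None:
        # Fail closed: an unknown task gets no data at all.
        raise ValueError(f"No field policy defined for task {task!r}")
    return {key: value for key, value in record.items() if key in allowed}


# Fake record for illustration only.
patient = {
    "first_name": "Ana",
    "ssn": "000-00-0000",
    "diagnosis_code": "M17.11",
    "procedure_code": "27447",
    "clinical_notes": "Persistent knee pain after conservative therapy.",
    "appointment_time": "09:30",
}

print(sorted(minimum_necessary(patient, "appointment_reminder")))
```

Failing closed on unknown tasks is a deliberate choice here: a new agent has to be given a field policy before it can read anything.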

The Shared Imperative: Auditability and Explainability

Both sectors share a common requirement: they must be able to explain what the model did and why. If a clinical decision support tool suggests a specific treatment, the doctor needs to see the reasoning and the specific data sources used. Local-first frameworks allow for chain-of-thought logging where every step of the AI's logic is saved in a private database that never touches the public web.

Enter Local-First Agent Frameworks

A local-first agent framework is a system where the AI intelligence (the LLM) and the orchestration layer (the "agent") run entirely on an organization’s own hardware or within its virtual private cloud.

Defining Local-First AI

In this model, the inference happens behind the company firewall. No data is sent to OpenAI, Anthropic, or Google. By using open-weight models like Llama 3.1, Mistral, or Qwen, companies can host the brain of the AI themselves.

What an Agent Framework Adds

A model by itself is just a calculator for words. An agent framework adds the following capabilities:

- Orchestration: Breaking a complex task into smaller sub-tasks.

- Tool Use: Allowing the AI to check a secure internal database or run a local script.

- Memory: Giving the AI a long-term memory of previous interactions without storing that data in the cloud.

- Reasoning: Multi-step logic that ensures the output is grounded in facts.
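To make these capabilities concrete, here is a deliberately tiny sketch of an agent loop. It is not a real framework: the tool names, the `TOOLS` registry, and the hard-coded plan are assumptions for illustration, and in practice the plan would come from a locally hosted model rather than being written by hand.

```python
from typing import Callable


def lookup_balance(account_id: str) -> str:
    """Tool: stand-in for a query against a secure internal database."""
    balances = {"ACC-1": "1,250.00 USD"}
    return balances.get(account_id, "unknown account")


def word_count(text: str) -> str:
    """Tool: stand-in for a local script."""
    return str(len(text.split()))


# Tool use: the agent may only call what is registered here.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_balance": lookup_balance,
    "word_count": word_count,
}


def run_agent(plan: list[tuple[str, str]], memory: list[str]) -> list[str]:
    """Orchestration: run each (tool_name, argument) step and record it in memory."""
    for tool_name, argument in plan:
        tool = TOOLS.get(tool_name)
        result = tool(argument) if tool else f"no such tool: {tool_name}"
        # Memory: an in-process list that never leaves the machine.
        memory.append(f"{tool_name}({argument}) -> {result}")
    return memory


memory = run_agent([("lookup_balance", "ACC-1"), ("word_count", "flag this account")], [])
for line in memory:
    print(line)
```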

The Architectural Shift: From Monolith to Composable Agents

Instead of one giant model trying to be a doctor, a lawyer, and an accountant, firms are building swarms of small, specialized agents. One agent might be an expert at reading ISDA schedules (financial contracts), while another is an expert at checking those schedules against current SEC regulations. These agents talk to each other over a local network, ensuring that sensitive data never takes a trip to a third-party server.

Real-World Use Cases Driving Adoption

The move to private AI is not just a theoretical preference. It is happening because specific high-value tasks cannot be done safely in the cloud.

Finance: Fraud Detection and Alert Triage

Large banks process millions of transactions a day. When a potential fraud alert is triggered, an analyst must review the history of that customer. Sending a full transaction history to a cloud AI for reasoning is a massive privacy risk. Instead, banks use local agents to:

- Query the private ledger for the last 50 transactions.

- Analyze patterns locally to see if this matches known structuring behaviors.

- Summarize the findings for a human auditor.
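The three steps above can be sketched as a small local pipeline. This is a simplified illustration, not a production fraud rule: the 10% margin and the three-hit minimum are assumptions chosen for the example, the ledger is fake, and the summary is a plain template where a real stack would call a locally hosted model.

```python
from dataclasses import dataclass

REPORTING_THRESHOLD = 10_000  # US cash reporting threshold in dollars
NEAR_MARGIN = 0.10            # assumption: "just under" means within 10% below
MIN_HITS = 3                  # assumption: hits needed before flagging


@dataclass
class Transaction:
    tx_id: int
    kind: str
    amount: float


def last_n(ledger: list[Transaction], n: int = 50) -> list[Transaction]:
    """Step 1: fetch the most recent n transactions from the private ledger."""
    return sorted(ledger, key=lambda t: t.tx_id)[-n:]


def near_threshold_deposits(transactions: list[Transaction]) -> list[Transaction]:
    """Step 2: find cash deposits sitting just under the reporting threshold."""
    floor = REPORTING_THRESHOLD * (1 - NEAR_MARGIN)
    return [
        t for t in transactions
        if t.kind == "cash_deposit" and floor <= t.amount < REPORTING_THRESHOLD
    ]


def triage(ledger: list[Transaction]) -> dict:
    """Step 3: summarize the findings for a human auditor."""
    recent = last_n(ledger)
    hits = near_threshold_deposits(recent)
    return {
        "flagged": len(hits) >= MIN_HITS,
        "hit_ids": [t.tx_id for t in hits],
        "summary": f"{len(hits)} near-threshold cash deposits in the last {len(recent)} transactions",
    }


ledger = [
    Transaction(1, "card_payment", 42.50),
    Transaction(2, "cash_deposit", 9_500.00),
    Transaction(3, "cash_deposit", 9_800.00),
    Transaction(4, "wire", 15_000.00),
    Transaction(5, "cash_deposit", 9_900.00),
]
print(triage(ledger))
```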

Finance: Regulatory Document Analysis

Analyzing 10-Ks, ISDA schedules, and private equity offering memoranda involves handling non-public information. Local-first agents can summarize these documents while keeping the knowledge of those documents inside a private vector database. This prevents the information from being leaked or used to train a competitor's model.

Healthcare: Clinical Decision Support

At the point of care, a doctor might use an AI to help with a differential diagnosis. This involves inputting symptoms, lab results, and medical history. By using a local framework, the hospital ensures this Protected Health Information (PHI) stays within the hospital’s own systems, alongside its electronic health record (EHR). The AI provides suggestions based on the latest medical journals without that patient's specific history ever leaving the hospital's network.

Healthcare: Prior Authorization Automation

One of the biggest administrative burdens in healthcare is "prior auth": getting an insurance company to approve a procedure. This requires extracting data from payer guidelines and matching it against clinical notes. Private agents can automate this extraction process safely, which can shorten the time patients wait for approval from weeks to hours.

The Technical Architecture of a Private Agent Stack

Building a private agent stack is more complex than calling an API, but it provides a moat of security and proprietary value.

1. The Model Layer

As of 2026, several open-source and open-weights models have reached frontier levels of performance.

- Llama 3.1 405B: Meta’s massive model that rivals GPT-4o in reasoning and logic.

- Mistral Large: A highly efficient European model favored for its adherence to EU regulations.

- Phi-4 and Falcon: Smaller, "narrow" models that can run on a single workstation for specific tasks like coding or summarization.

2. The Orchestration Layer

This is the manager that directs the models. It handles the routing of questions. For example, if a question is about a medical code, the orchestrator sends it to a model fine-tuned on ICD-10 codes. If it is a general question, it goes to a larger model.
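A routing rule like the one described can be sketched in a few lines. This is a rough heuristic for illustration only: the model names `icd10-specialist` and `general-large` are hypothetical, and the pattern only approximates the shape of an ICD-10 code, so it will produce false positives on strings such as "B12".

```python
import re

# Approximate shape of an ICD-10 code: a letter, two digits, optional suffix.
ICD10_PATTERN = re.compile(r"\b[A-Z][0-9]{2}(?:\.[0-9A-Z]{1,4})?\b")


def route(question: str) -> str:
    """Pick a model name for the question (names are hypothetical)."""
    if ICD10_PATTERN.search(question) or "icd-10" in question.lower():
        return "icd10-specialist"
    return "general-large"


print(route("What does E11.9 mean?"))
print(route("Summarize this memo for the board"))
```

Real orchestrators often use a small classifier model instead of a regular expression, but the contract is the same: question in, target model out.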

3. The Retrieval and Memory Layer (RAG)

Retrieval-Augmented Generation (RAG) is the process of giving the AI open-book access to your company’s files. In a local-first setup, the index of these files is stored in a private vector database hosted on-premises.
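The retrieval step can be shown without any external service. The sketch below is a toy, assuming word counts as a stand-in for real embeddings and an in-memory list as a stand-in for a private vector database; `LocalIndex` and `build_prompt` are hypothetical names, and the documents are invented.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag of lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class LocalIndex:
    """In-memory stand-in for an on-premises vector database."""

    def __init__(self) -> None:
        self._docs: list[tuple[str, str, Counter]] = []

    def add(self, doc_id: str, text: str) -> None:
        self._docs.append((doc_id, text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[tuple[str, str]]:
        q = embed(query)
        ranked = sorted(self._docs, key=lambda d: cosine(q, d[2]), reverse=True)
        return [(doc_id, text) for doc_id, text, _ in ranked[:k]]


def build_prompt(question: str, index: LocalIndex, k: int = 1) -> str:
    """Assemble the open-book prompt that would go to the local model."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in index.search(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


index = LocalIndex()
index.add("policy-1", "Wire transfer limit is reviewed by compliance each quarter")
index.add("policy-2", "Employee travel expenses require manager approval")
print(build_prompt("What is the wire transfer limit?", index))
```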

4. The Observability and Audit Layer

Every single thought the agent has must be logged. This layer tracks:

- Which user asked the question?

- What data was retrieved from the internal database?

- What was the exact model version used?

- Did a human review the final output?

This level of detail is exactly what regulators like the SEC or the UK’s NHS AI Lab look for during an inspection.
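The four questions above map naturally onto a log record. The sketch below is one possible shape, not a standard: `AuditRecord`, `AuditLog`, and the field names are assumptions, and chaining each entry to the hash of the previous one is a design choice added here so that later edits to the log become detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    user_id: str              # which user asked the question
    question: str
    retrieved_doc_ids: list   # what data was retrieved
    model_version: str        # exact model version used
    human_reviewed: bool      # did a human review the output
    prev_hash: str            # link to the previous entry


def record_hash(record: AuditRecord) -> str:
    """Hash a record's canonical JSON form."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[tuple[AuditRecord, str]] = []

    def append(self, **fields) -> AuditRecord:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        record = AuditRecord(prev_hash=prev, **fields)
        self.entries.append((record, record_hash(record)))
        return record

    def verify(self) -> bool:
        """Recompute every hash and check the chain is unbroken."""
        prev = self.GENESIS
        for record, digest in self.entries:
            if record.prev_hash != prev or record_hash(record) != digest:
                return False
            prev = digest
        return True


log = AuditLog()
log.append(user_id="analyst-7", question="Why was alert 991 raised?",
           retrieved_doc_ids=["ledger-2231"], model_version="local-model@abc123",
           human_reviewed=True)
log.append(user_id="analyst-7", question="Summarize the account history",
           retrieved_doc_ids=["ledger-2231", "kyc-88"], model_version="local-model@abc123",
           human_reviewed=False)
print(log.verify())
```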

The Trade-offs Are Real: Capability vs. Security

It would be dishonest to suggest that local-first AI is better in every single way. Organizations must weigh the benefits against some very real challenges.

The Capability Gap

Frontier models like GPT-4o or Gemini Ultra still hold a slight edge in extreme reasoning or multi-modal tasks (like looking at an image and a spreadsheet simultaneously). While models like Llama 3.1 have significantly narrowed this gap, the very latest cloud models usually have a six-month lead on open-source alternatives.

The Operational Burden

When you use a cloud API, the vendor handles the hardware, the cooling, the security, and the uptime. When you go local-first, you own the infrastructure. This means you need:

- High-end GPUs.

- A team of DevOps engineers to manage the "LLMOps" pipeline.

- Rigorous internal security protocols to prevent your own employees from mishandling the local model.

The Convergence Argument

The gap between closed and open models is closing faster than most experts predicted in 2024. For 95% of enterprise tasks—summarization, data extraction, and logical routing—open models are now indistinguishable from their cloud counterparts. In fact, many firms find that a smaller local model fine-tuned on their specific financial or medical data actually outperforms a general giant model like GPT-4.

Regulatory Tailwinds Accelerating the Shift

The legal landscape is the final push moving companies toward local-first AI.

The EU AI Act

Entering its full implementation phase in 2026, the EU AI Act classifies many AI systems used in healthcare and essential financial services as high-risk. High-risk systems carry legal obligations around transparency and the provision of information to the organizations that deploy them. It is much easier to prove transparency when you own the model and the data logs than when you are renting them from a third party in a different country.

US Executive Order on AI and NIST Frameworks

The US government has released the NIST AI Risk Management Framework. While not law in the same way as the EU Act, it has become the gold standard for insurance companies. If a bank wants to be "insurable" against AI-related errors, it must follow NIST guidelines, which emphasize data governance and robustness—both of which are easier to achieve in a local-first environment.

NHS AI Lab and "Data Foundations"

The NHS has shifted its strategy toward shared data layers. They are moving away from isolated pilots and toward a unified architecture where AI resolves issues (like updating records) rather than just answering questions. This agentic approach requires the AI to be deeply integrated into the hospital's private network.

How to Evaluate a Local-First Agent Framework

If your organization is considering moving away from the big three cloud providers and toward a private stack, use this practical checklist to evaluate your options:

| Requirement | Why It Matters |
| --- | --- |
| Data Perimeter | Can you guarantee that zero bytes of data leave your VPC or on-prem servers? |
| Model Weight Ownership | Do you have the right to run the model indefinitely, even if the vendor goes out of business? |
| Full Audit Trail | Does the framework log the prompt, the retrieved context, and the final output for every query? |
| Identity Integration | Does the AI respect your existing "user permissions"? (e.g., An intern shouldn't be able to ask the AI for the CEO's salary). |
| Latency and Cost | Is the cost per inference lower than a cloud API when you factor in your own hardware costs? |
| Fine-tuning Pipeline | Can you "teach" the model your company's specific jargon without sharing that jargon with a third party? |
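The identity integration requirement is the easiest one on this checklist to test in code. The sketch below is a hedged illustration, assuming documents carry a set of allowed roles; the `Document` type, role names, and sample data are invented. The key design choice is to filter before retrieval, so a restricted document never reaches the model's context at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset


def visible_documents(documents: list[Document], user_roles: set[str]) -> list[Document]:
    """Keep only documents that share at least one role with the user."""
    return [d for d in documents if d.allowed_roles & user_roles]


# Invented sample data.
corpus = [
    Document("handbook", "Office hours and holiday policy", frozenset({"intern", "staff", "hr"})),
    Document("exec-comp", "Executive compensation schedule", frozenset({"hr", "board"})),
]

print([d.doc_id for d in visible_documents(corpus, {"intern"})])
print([d.doc_id for d in visible_documents(corpus, {"hr"})])
```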

From Capability to Accountability

The question for 2026 is no longer "Which AI is the smartest?" The real question is "Which AI can we trust with our most sensitive assets?"

For finance and healthcare, the answer is increasingly clear. The monolithic, cloud-only model is a relic of the "experimental" phase of AI. As these technologies move into core operations—processing trades, diagnosing patients, and managing billions of dollars in assets—the need for accountability outweighs the convenience of a simple API key.

The move to local-first is not a step backward in capability. It is a step forward in maturity. By adopting private agent frameworks, regulated industries are building a future where AI is powerful, private, and, most importantly, under their control.

If you are ready to explore how a private, agentic architecture can secure your firm’s future, visit NeuraHQ to learn more about our local-first deployment solutions.

Desperate times call for desperate Google/ChatGPT searches, right? "Best Shopify apps for sales." "How to increase online sales fast." "AI tools for ecommerce growth."

Been there. Done that. Installed way too many apps.

But here's what nobody tells you while you're doom-scrolling through Shopify app reviews at 2 AM—that magical online sales-boosting app you're searching for? It doesn't exist. Because if it did, Jeff Bezos would've bought (or built!) it yesterday, and we (fellow eCommerce store owners) would all be retired in Bali by now.

Growing a Shopify store and increasing online sales isn’t easy—we get it. While everyone’s out chasing the next “revolutionary” tool/trend (looking at you, DeepSeek), the real revenue drivers are probably hiding in plain sight—right there inside your customer data.

After working with Shopify stores like yours (shoutout to Cybele, who recovered almost 25% of their abandoned carts with WhatsApp automation), we’ve cracked the code on what actually moves the needle.

Ready to stop app-hopping and start actually growing your sales by using what you already have? Here are four fixes that will get you there!

Fix #1: Convert abandoned carts instantly (Like, actually instantly)

The Painful Truth: You're probably losing about 70% of your potential sales to cart abandonment. That's not just a statistic—it's real money walking out of your digital door. And looking for yet another Shopify app for abandoned cart recovery isn't going to fix it if you're not getting the fundamentals right.

The Quick Fix: Everyone knows you need multi-channel recovery that hits the sweet spot between "Hey, did you forget something?" and "PLEASE COME BACK!" But here's the reality—most recovery apps are a one-trick pony. They either do email OR WhatsApp, not both. And don't even get us started on personalizing offers based on cart value—that usually means toggling between three different dashboards while praying your apps talk to each other.

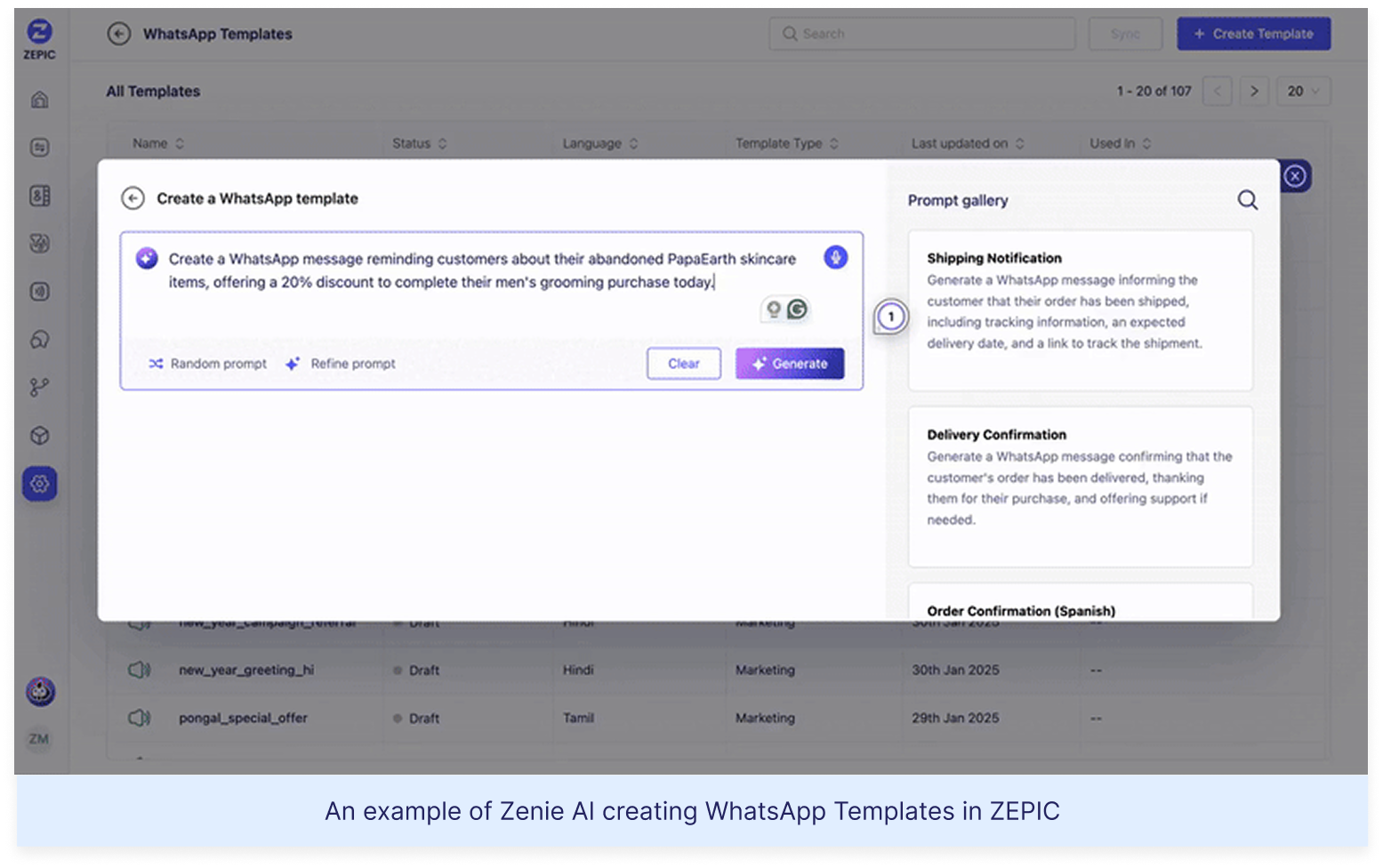

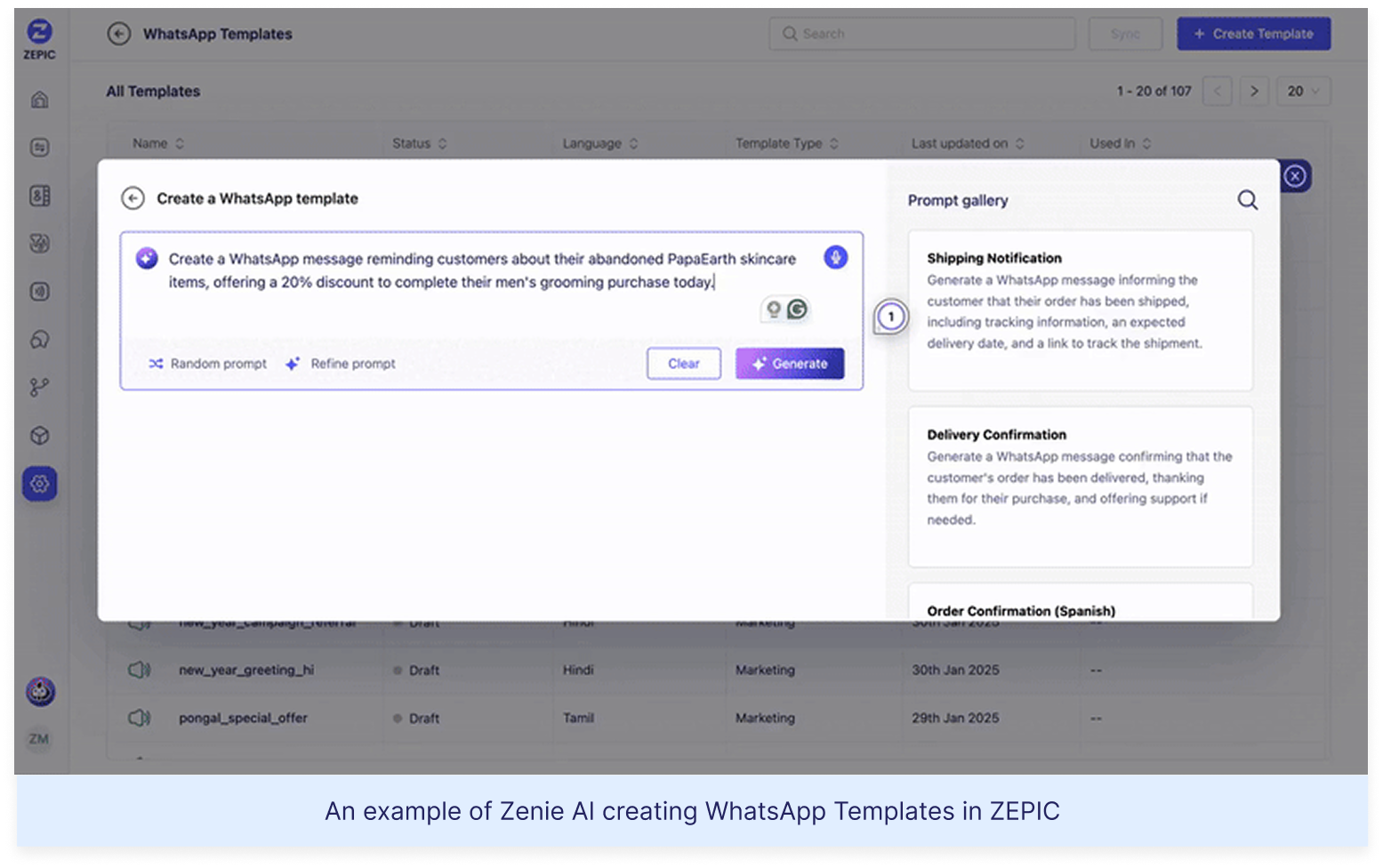

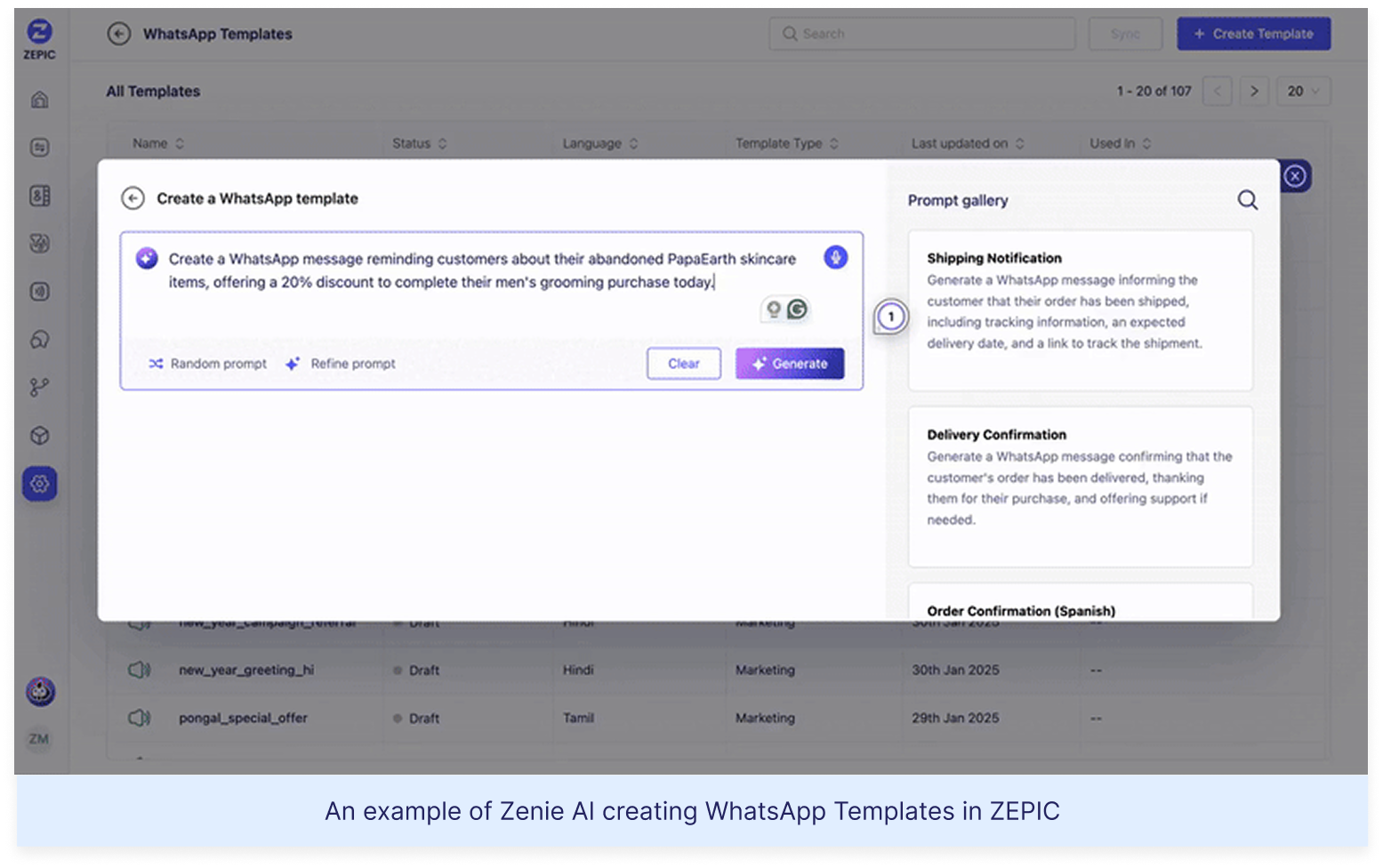

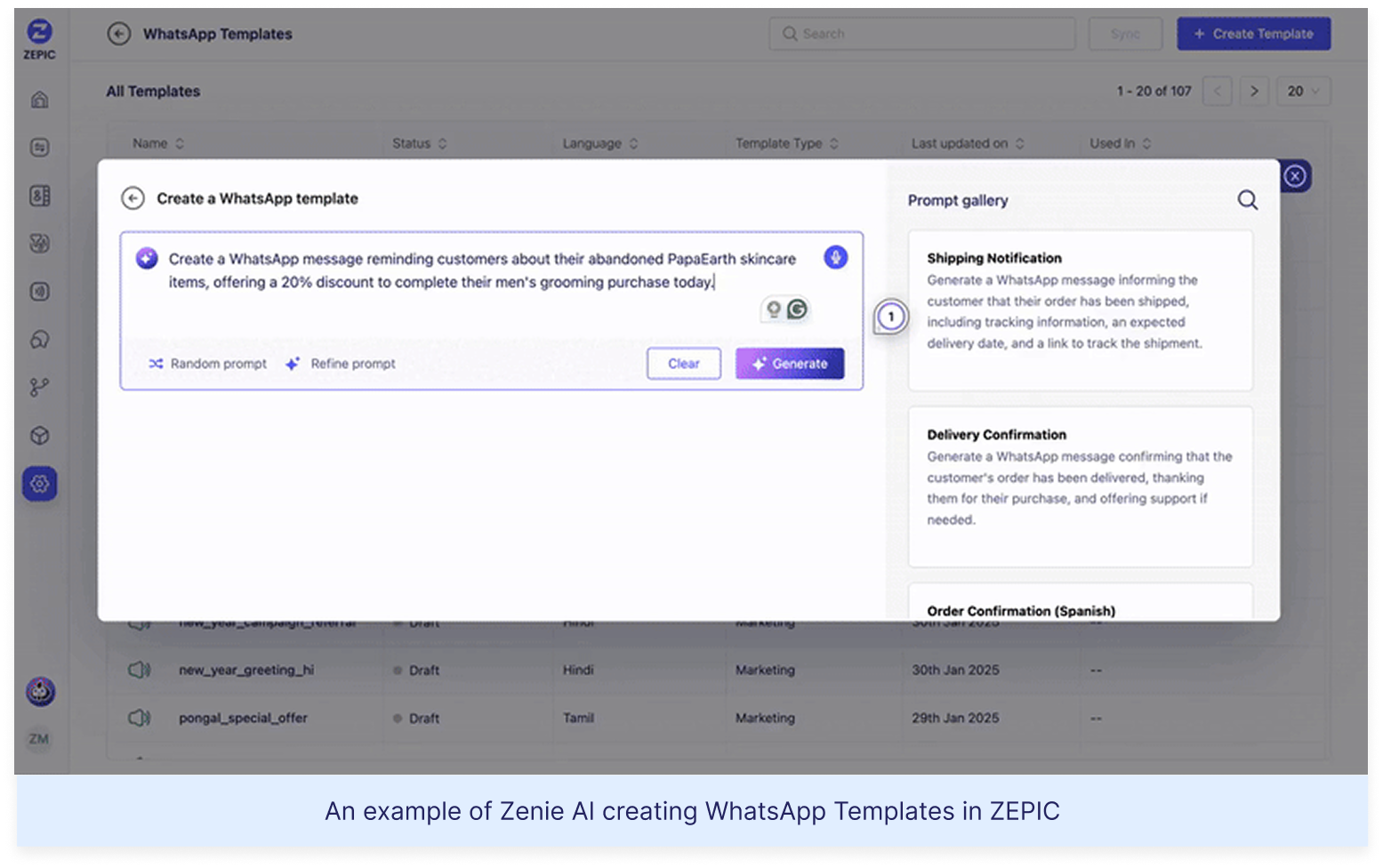

Enter ZEPIC: This is where we come in. With ZEPIC's automated Flows, you can:

- Launch WhatsApp recovery messages (with 95% open rates!)

- Set up perfectly timed email sequences (or vice versa)

- Create personalized recovery offers not just on cart value but based on your customer’s behavior/preferences

- Track and optimize everything from one dashboard

Fix #2: Reactivate past customers today

Offering light at the end of the tunnel is Google’s Privacy Sandbox, which seeks to ‘create a thriving web ecosystem that is respectful of users and private by default’. As the name suggests, your Chrome browser will take the role of a ‘privacy sandbox’ that holds all your data (visits, interests, actions, etc.), disclosing it to other websites and platforms only with your explicit permission. If you have not done so yet, we recommend testing your websites, audience relevance and advertising attribution with Chrome’s trial of the Privacy Sandbox.

Top 3 impacts of the third-party cookie phase-out

| Who’s impacted | How | What next |
| --- | --- | --- |
| Digital advertising and acquisition teams | Lack of cookie data results in drastic fall in website traffic and conversion rate | Review all cookie-based audience acquisition. Sign up for Chrome’s trial of the Privacy Sandbox |
| Digital Customer Experience | Customers are not served relevant, personalised experiences: on the web, over social channels and communication media | Multiply efforts to collect first-party customer data. Implement a Customer Data Platform |
| Security, Privacy and Compliance teams | Increased scrutiny from regulators and questions from customers about data storage and usage | Review current cookie and communication consent management, ensure to align with latest privacy regulations |
