TLDR:

- AI agents often fail in production because they do not share context effectively, leading to repetitive questions, broken workflows, and poor user experiences.

- In agentic AI systems, context refers to the current working information, while memory stores long-term knowledge and state tracks workflow progress.

- Large context windows alone are not enough because LLMs struggle with information overload and the “lost in the middle” problem.

- Isolated agents create issues like blank-slate behavior, duplicated work, contradictory actions, and failed handoffs between systems.

- Effective multi-agent systems require genuine communication where agents share goals, context, constraints, and workflow understanding.

- Shared context acts like a centralized “whiteboard” that allows all agents to understand workflow progress and collaborate efficiently.

- Architectures like vector databases, knowledge graphs, orchestrator-agent models, and event-driven communication enable reliable context sharing.

- Strong multi-agent design principles include explicit context blocks, persistent state management, defined agent responsibilities, and graceful error handling.

- Enterprise AI deployments fail when companies treat agents as isolated tools instead of building shared context as foundational infrastructure.

Imagine a customer reaching out to a premium airline because their flight was canceled. They spend twenty minutes chatting with a booking agent that gathers their preferences, loyalty number, and dietary restrictions. The booking agent realizes it cannot process a refund directly and hands the session over to a billing agent.

The billing agent opens the chat and asks, "Hello! How can I help you today? What is your ticket number?"

The customer is instantly frustrated. The information they provided seconds ago hasn’t been passed on to the other agent. This is a classic example of an AI agent handoff failure in production. While the individual models are intelligent, the system as a whole is forgetful. This lack of continuity is the silent killer of modern automation.

As businesses move from simple chatbots to complex multi-agent AI systems, the challenge shifts from model intelligence to system coordination. AI agents are incredibly powerful when working in isolation. However, they become brittle and unreliable when they have to collaborate.

This article explores why shared context is the essential connective tissue for reliable AI and how to build systems where agents actually talk to each other.

What We Mean by Context in Agentic AI

To understand why agents fail, we must first define what they are supposed to remember. In the world of Large Language Models (LLMs), context is the information the model can see and use to generate a response at any given moment.

The Difference Between Memory, Context, and State

In professional AI agent architecture, we categorize information into three distinct buckets:

- Memory: This is the long-term storage of past interactions. It functions like a library where the agent can look up what happened weeks ago.

- Context: This is the working space of the agent. It includes the current conversation, the specific task at hand, and any immediate data retrieved from tools.

- State: This is the technical status of a process. For example, if an agent is booking a flight, the state tracks whether the payment is pending, completed, or failed.

Short-Term vs. Long-Term Context in LLMs

LLMs use token windows to process information.

Short-term context is often referred to as episodic memory. It covers the immediate back-and-forth of a single session. Long-term context, or semantic memory, involves pulling relevant facts from a massive database using a RAG pipeline (Retrieval-Augmented Generation).

While a model might have a huge context window, it still faces the lost in the middle phenomenon. Research shows that LLMs are great at recalling information from the very beginning or the very end of a prompt but struggle with details buried in the center. This is a primary reason why AI agents fail when prompts become too bloated with unnecessary data.

Why Context Windows Create a Hard Ceiling

Even with context windows expanding to millions of tokens, they are not a substitute for true communication. Loading every single piece of data into a prompt is expensive, slow, and increases the risk of hallucinations. When multi-agent LLM communication relies solely on dumping huge amounts of text into a shared window, the agents lose the signal in the "noise."

How Isolated Agents Fail in the Real World

In many current agentic AI workflows, agents operate like coworkers who are forbidden from speaking to each other. They only communicate through a manager who passes strictly defined files. This isolation leads to several predictable failure patterns.

The Blank Slate Problem

Every time an isolated agent is triggered, it starts from zero. Without a mechanism for AI agents to share context, the agent has no idea what its predecessor did. If an analysis agent spends ten minutes cleaning a dataset and then passes it to a Reporting Agent, the second agent may attempt to clean the data again because it does not know the work is already done. This redundancy wastes compute tokens and increases latency.

Handoff Failures: The Data Gap

A handoff occurs when Task A is finished, and Task B begins. In many autonomous AI agent problems, the handoff is just a raw data transfer. If the first agent fails to summarize its findings or explain the why behind its actions, the second agent lacks the intent.

For instance, a research agent might find three sources for a blog post. If it hands those sources to a writing agent without explaining that Source #2 is the most reliable, the writing agent might prioritize Source #3, leading to a lower-quality output.

Contradiction Loops

When multiple agents work on the same project without a shared state, they can work at cross-purposes. Consider an AI-driven marketing system:

- Agent A (Social Media) schedules a post for 10:00 AM.

- Agent B (Product Update) changes the product name at 10:05 AM.

- Agent A is unaware of the name change and posts the old name.

This lack of inter-agent communication creates a fragmented brand voice and technical errors that require human intervention to fix.

Why Agents Need to Communicate (Not Just Operate)

The solution to these failures is a shift in philosophy. We must move from building agents that "do tasks" to agents that "share understanding."

Data Passing vs. Genuine Communication

Data passing is the act of sending a JSON object from Agent A to Agent B. Communication is the act of sharing context, goals, and constraints. When agents communicate, they don't just send the final answer. They send the status of their work and any obstacles they encountered.

Shared Context as a Coordination Primitive

In multi-agent AI systems, shared context acts as a whiteboard that all agents can see. Instead of passing notes back and forth, they all refer to a central source of truth. This allows for better AI agent orchestration because every agent knows the current progress of the entire workflow, not just their individual slice.

Think of a professional kitchen. The head chef, the line cooks, and the servers do not just pass plates. They shout "Order in!" and "Behind!" to maintain a shared understanding of the environment. If a server simply dropped a plate in front of a cook without context, the kitchen would collapse. AI agent collaboration requires the same level of environmental awareness.

Architectures That Enable Shared Context

Building a system where agents talk requires specific infrastructure. Here are the most effective patterns for agentic AI workflows.

Centralized Memory Stores

Instead of keeping memory inside the LLM prompt, developers use external databases.

- Vector Databases: These store semantic information (meanings and concepts) that agents can query.

- Knowledge Graphs: These store relationships. An agent can see that "User X" is related to "Company Y" and has a "Preference for Z." This provides a structured way to manage AI workflow automation agent memory issues.

Orchestrator-Agent Patterns

Some modern frameworks have popularized the orchestrator model. In this setup, a manager agent maintains the global context. It delegates tasks to worker agents and then integrates their findings back into the central state. This ensures that the worker agents stay focused while the manager handles the big picture.

Message-Passing and Event-Driven Communication

In more advanced systems, agents use an agent-to-agent protocol. This is similar to how microservices communicate in traditional software. When Agent A finishes a task, it emits an "event." Other agents "subscribe" to that event and update their internal context accordingly. This allows for building AI agents that work together in real-time rather than in a rigid, linear sequence.

| Feature |

Isolated Agents |

Context-Aware Agents |

| Communication |

Direct handoff only |

Shared "whiteboard" or state |

| Memory |

Resets every task |

Persistent across sessions |

| Efficiency |

High token waste (redundancy) |

Low token waste (reuses data) |

| Reliability |

Brittle; prone to "blank slates" |

Robust; understands history |

| Scalability |

Hard to manage 3+ agents |

Designed for complex swarms |

Design Principles for Context-Aware Multi-Agent Systems

If you are designing an AI agent architecture for enterprise use, follow these four guiding principles to ensure reliability.

Principle 1: Context Must Be Explicit, Not Assumed

Never assume an agent knows why it is performing a task. Every prompt should include a "Context Block" that defines the current state of the project. If an agent is joining a workflow midstream, the system should provide a concise summary of all previous steps.

Principle 2: Agents Should Declare Needs and Products

A common reason why AI agents fail is that they receive the wrong type of data. In a well-designed system, Agent A declares: "I produce a formatted CSV." Agent B declares: "I require a CSV to generate a chart." This explicit mapping prevents handoff errors and ensures the agent-to-agent protocol remains functional.

Principle 3: Persistent State Over Ephemeral Sessions

Context should live outside the chat session. By using persistent state management, you allow agents to "pick up where they left off" even if the system restarts. This is vital for long-running tasks like market research or software development that might take hours or days to complete.

Principle 4: Graceful Degradation

If an agent loses context or receives a corrupted handoff, it should not guess. It should be programmed to "ask for clarification" from the orchestrator or a human. Building agents that recognize when they are missing information is a key step in multi-agent LLM communication best practices.

What This Means for Enterprise AI Deployments

Many companies find that their AI Proof of Concepts (PoCs) work beautifully, but their production deployments fail. This is often because a PoC uses a single agent for a simple task, while production requires a chain of agents for a complex business process.

Why Production Is Harder Than PoCs

In a controlled demo, context is easy to manage. In the real world, data is messy. Users change their minds, APIs return errors, and tasks are interrupted. Without a robust system for how to share context between multiple AI agents, these real-world "edge cases" cause the entire system to break.

Shared Context as an Infrastructure Investment

Enterprises must stop viewing AI as a series of isolated prompts. Instead, shared context should be viewed as a layer of infrastructure, similar to a database or a cloud server. Investing in a centralized context layer allows companies to scale from 2 agents to 200 without a linear increase in errors. This is the foundation of agentic AI system design for enterprises.

The Future of AI Is Agents That Listen

The next stage of the AI revolution is not about making models "smarter" in terms of raw facts. It is about making them more collaborative. We are moving away from the era of the solitary genius AI toward the era of the "high-performing AI team."

When agents can share context, they stop being tools and start being digital coworkers. They can anticipate needs, correct each other's mistakes, and maintain a seamless experience for the end user. Intelligence alone is no longer the bottleneck for automation. The real challenge is coordination.

By implementing structured memory, clear communication protocols, and a shared state, you can transform brittle, isolated bots into a reliable multi-agent system. The future of AI belongs to the agents that know how to listen to one another.

Key Takeaways for Building Multi-Agent Systems

- Avoid the "Blank Slate": Use external state management so agents don't start every task from zero.

- Prioritize Communication: Focus on how information moves between agents, not just the quality of the individual LLM.

- Use the Right Architecture: Implement vector DBs or knowledge graphs to serve as a "long-term memory" for your agents.

- Be Explicit: Clearly define the inputs and outputs for every agent in your workflow.

Building a context-aware system is the difference between an AI that works in a demo and an AI that works in the real world. Ensure your agents have the "connective tissue" they need to succeed.

Ready to Solve the Context Gap?

Isolated agents are the biggest hurdle to scaling AI in the enterprise. NeuraHQ helps bridge this gap with persistent state management and intelligent orchestration.

Build AI agents that actually work together. Explore NeuraHQ today to see how shared context can transform your automation strategy.

Desperate times call for desperate Google/Chat GPT searches, right? "Best Shopify apps for sales." "How to increase online sales fast." "AI tools for ecommerce growth."

Been there. Done that. Installed way too many apps.

But here's what nobody tells you while you're doom-scrolling through Shopify app reviews at 2 AM—that magical online sales-boosting app you're searching for? It doesn't exist. Because if it did, Jeff Bezos would've bought (or built!) it yesterday, and we (fellow eCommerce store owners) would all be retired in Bali by now.

Growing a Shopify store and increasing online sales isn’t easy—we get it. While everyone’s out chasing the next “revolutionary” tool/trend (looking at you, DeepSeek), the real revenue drivers are probably hiding in plain sight—right there inside your customer data.

After working with Shopify stores like yours (shoutout to Cybele, who recovered almost 25% of their abandoned carts with WhatsApp automation), we’ve cracked the code on what actually moves the needle.

Ready to stop app-hopping and start actually growing your sales by using what you already have? Here are four fixes that will get you there!

Fix #1: Convert abandoned carts instantly (Like, actually instantly)

The Painful Truth: You're probably losing about 70% of your potential sales to cart abandonment. That's not just a statistic—it's real money walking out of your digital door. And looking for yet another Shopify app for abandoned cart recovery isn't going to fix it if you're not getting the fundamentals right.

The Quick Fix: Everyone knows you need multi-channel recovery that hits the sweet spot between "Hey, did you forget something?" and "PLEASE COME BACK!" But here's the reality—most recovery apps are a one-trick pony. They either do email OR WhatsApp, not both. And don't even get us started on personalizing offers based on cart value—that usually means toggling between three different dashboards while praying your apps talk to each other.

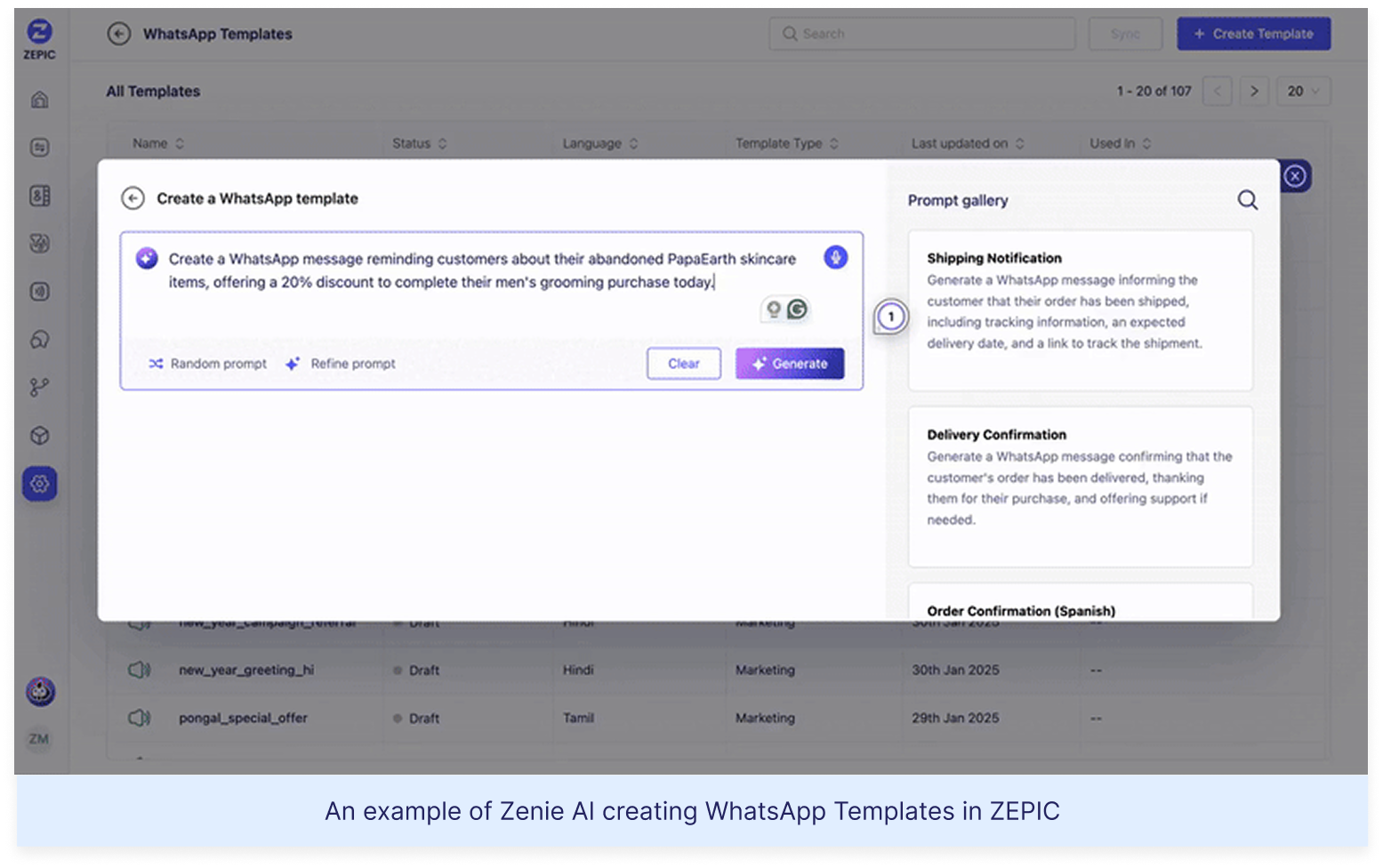

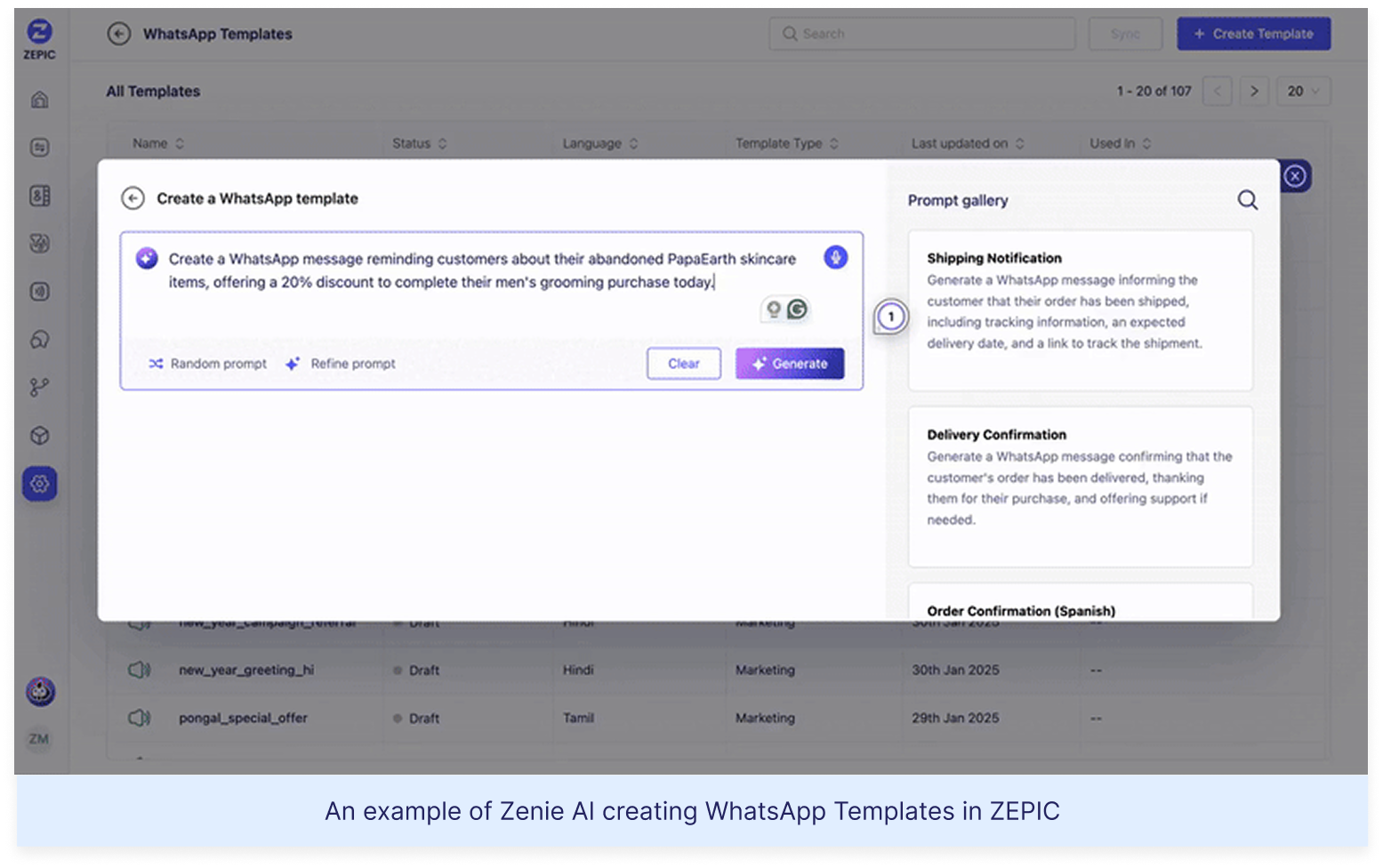

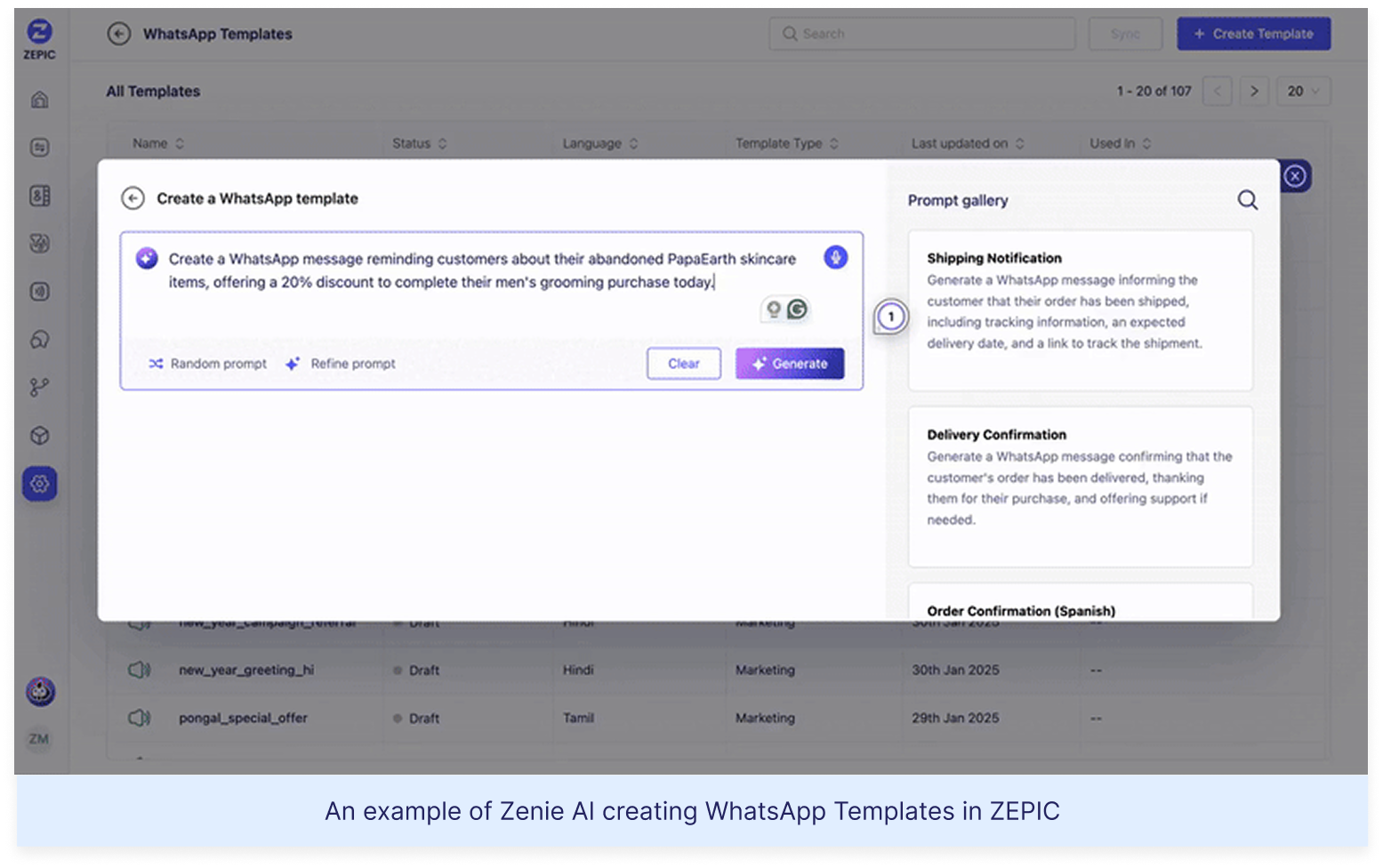

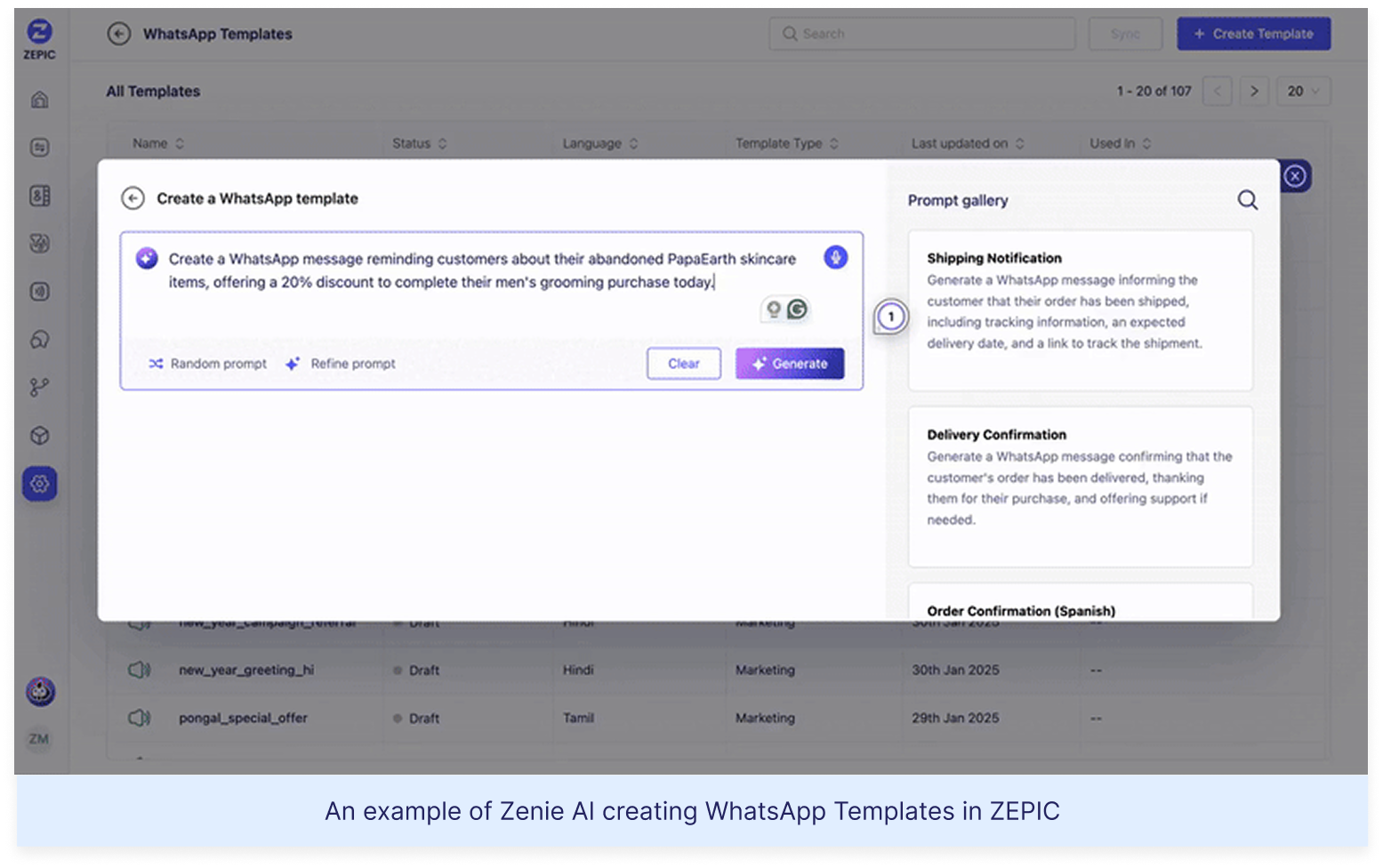

Enter ZEPIC: This is where we come in. With ZEPIC's automated Flows, you can:

Launch WhatsApp recovery messages (with 95% open rates!)

Set up perfectly timed email sequences (or vice versa)

Create personalized recovery offers not just on cart value but based on your customer’s behavior/preferences

Track and optimize everything from one dashboard

Fix #2: Reactivate past customers today

The Painful Truth: You're probably losing about 70% of your potential sales to cart abandonment. That's not just a statistic—it's real money walking out of your digital door. And looking for yet another Shopify app for abandoned cart recovery isn't going to fix it if you're not getting the fundamentals right.

The Quick Fix: Everyone knows you need multi-channel recovery that hits the sweet spot between "Hey, did you forget something?" and "PLEASE COME BACK!" But here's the reality—most recovery apps are a one-trick pony. They either do email OR WhatsApp, not both. And don't even get us started on personalizing offers based on cart value—that usually means toggling between three different dashboards while praying your apps talk to each other.

Enter ZEPIC: This is where we come in. With ZEPIC's automated Flows, you can:

Launch WhatsApp recovery messages (with 95% open rates!)

Set up perfectly timed email sequences (or vice versa)

Create personalized recovery offers not just on cart value but based on your customer’s behavior/preferences

Track and optimize everything from one dashboard

Offering light at the end of the tunnel is Google’s Privacy Sandbox which seeks to ‘create a thriving web ecosystem that is respectful of users and private by default’. Like the name suggests, your Chrome browser will take the role of a ‘privacy sandbox’ that holds all your data (visits, interests, actions etc) disclosing these to other websites and platforms only with your explicit permission. If not yet, we recommend testing your websites, audience relevance and advertising attribution with Chrome’s trial of the Privacy Sandbox.

Top 3 impacts of the third-party cookie phase-out

Who’s impacted

How

What next

Digital advertising and

acquisition teams

Lack of cookie data results in drastic fall in website traffic and conversion rate

Review all cookie-based audience acquisition. Sign up for Chrome’s trial of the Privacy Sandbox

Digital Customer Experience

Customers are not served relevant, personalised experiences: on the web, over social channels and communication media

Multiply efforts to collect first-party customer data. Implement a Customer Data Platform

Security, Privacy and Compliance teams

Increased scrutiny from regulators and questions from customers about data storage and usage

Review current cookie and communication consent management, ensure to align with latest privacy regulations

%201.png)