TLDR:

- Enterprises are rapidly adopting autonomous AI agents in 2026, but most organizations still lack full visibility into how these systems operate.

- AI agents differ from traditional automation because they use reasoning, tool access, and autonomous decision-making rather than fixed rule-based logic.

- AI agents create new security risks through tool usage, memory systems, prompt injection attacks, and multi-agent orchestration.

- Strong AI agent security requires identity management, least-privilege access, secrets management, network controls, output filtering, and red-teaming practices.

- Governance frameworks are now essential due to regulations like the EU AI Act, requiring accountability, audit trails, explainability, and human oversight.

- Scaling AI agents across an enterprise demands orchestration frameworks, observability tools, token budgeting, circuit breakers, and workforce training.

- Industries like finance, healthcare, legal services, and manufacturing face stricter compliance and operational risks when deploying AI agents.

- Multi-agent orchestration is becoming the standard because specialized agents are easier to monitor, govern, and scale than one large general-purpose agent.

- Enterprises are encouraged to assess their maturity level across security, governance, and scale before moving AI agents into production.

- Organizations that balance AI innovation with enterprise-grade security, governance, and observability will gain the biggest operational and competitive advantages.

The year 2026 has arrived, and with it, a fundamental shift in how businesses operate. We have moved past the era of simple chatbots and basic Large Language Model (LLM) prompts. Today, the focus is on autonomous AI agents that can plan, use tools, and execute multi-step workflows with minimal human intervention.

Recent data highlights the speed of this transition. By the end of this year, an estimated 40% of enterprise applications will integrate task-specific AI agents, a massive jump from less than 5% only twelve months ago. However, this rapid adoption has created a significant oversight gap. Current reports indicate that only 24% of organizations have full visibility into what their AI agents are actually doing.

For CIOs, CTOs, and risk officers, 2026 is the year to sink or swim. Deploying an agent is easy, but deploying one that is secure, compliant, and scalable is a complex engineering and governance challenge. This guide provides a comprehensive enterprise AI agent readiness checklist to help your organization move from experimental pilots to robust, production-grade agentic systems.

What makes AI agents different from traditional software

To understand enterprise AI agent readiness, we must first recognize that agents are not typical software. Traditional automation follows "if-this-then-that" logic. An AI agent, by contrast, uses reasoning to determine the best path to a goal.

Autonomous decision-making vs. rule-based automation

Traditional software is predictable because its code is static. If the input is A, the output is always B. AI agents use probabilistic reasoning. They might choose to call an API, search a database, or ask a human for clarification, depending on the context. This autonomy provides incredible flexibility, but it also means that the execution path is not hard-coded.

New attack surfaces: tool use and memory

Agents are built to take action. They have tool-use capabilities (often called "function calling") that allow them to interact with your CRM, email servers, or cloud infrastructure. This creates a new attack surface. If an agent is compromised via a prompt injection attack, the attacker not only gets a text response, but they might also gain the ability to delete database records or send unauthorized emails.

The breakdown of legacy IT security

Most legacy security models rely on a perimeter. You protect the gate and assume everything inside is safe. Agentic AI breaks this because agents often act as internal users with high levels of access. When an agent can autonomously navigate through different systems, a single point of failure can lead to lateral movement across the entire enterprise.

The multi-agent orchestration problem

Scaling in 2026 involves more than one agent. It involves multi-agent orchestration, in which different specialized agents collaborate. Managing the communication, permissions, and hand-offs between these agents adds a layer of complexity that traditional middleware was never designed to handle.

The security readiness checklist: 10 checkpoints

Security is the most significant hurdle for enterprise AI agent initiatives. Because agents take actions on their own, a breach can do more damage and cost more to contain. In 2026, the average cost of a data breach has risen to $4.88 million globally, with US-based organizations facing costs as high as $9.44 million per incident.

Use these ten checkpoints to evaluate your enterprise AI agent security posture:

- 1. Identity and authentication: Does every agent have a unique machine identity? Agents should never share credentials with human users. Use OAuth scopes and service accounts to track exactly which agent performed which action.

- 2. Least-privilege access: Are your agents over-provisioned? An agent designed to summarize meetings should not have edit access to your financial database. Apply zero-trust principles to every tool an agent can call.

- 3. Prompt injection and adversarial defense: Have you implemented filters for both direct and indirect prompt injection? Indirect injection is a major threat in 2026, where an agent reads a malicious email or website and is tricked into performing unauthorized tasks.

- 4. Secrets management: Are API keys and credentials hard-coded into prompts or agent configurations? Use a centralized vault to inject credentials at runtime.

- 5. Network egress controls: Can your agent talk to the open internet without restriction? Use sandboxing and allow-lists to ensure agents only communicate with approved external domains.

- 6. Output filtering and moderation: Do you have a secondary guardrail model checking the agent’s output? This prevents the agent from leaking sensitive data or generating toxic content.

- 7. Supply chain security: Are you tracking the provenance of the base models and third-party tools your agents use? A vulnerability in an open-source agent framework can compromise your entire stack.

- 8. Incident response playbooks: Does your security team know how to kill a runaway agent? You need specific procedures for AI failures that include isolating the agent and auditing its recent chain of thought.

- 9. Data residency and encryption: Is the data your agent processes staying within your required geography? Ensure all data is encrypted at rest and in transit, especially when using multi-cloud agent architectures.

- 10. Red-teaming for agents: Are you performing agentic penetration testing? Traditional vulnerability scans are not enough. You must actively try to subvert the agent’s logic to find weaknesses in its reasoning.
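
Checkpoints 1, 2, and 5 can be combined into a single deny-by-default gate that runs before any tool call. The sketch below is only an illustration under stated assumptions: `AgentIdentity`, `authorize_tool_call`, the tool names, and the domain `api.example.com` are hypothetical and do not come from any particular agent framework.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical per-agent machine identity (checkpoint 1)."""
    agent_id: str
    allowed_tools: frozenset    # least-privilege tool grants (checkpoint 2)
    allowed_domains: frozenset  # egress allow-list (checkpoint 5)


class PolicyViolation(Exception):
    """Raised when an agent requests something outside its grants."""


def authorize_tool_call(agent: AgentIdentity, tool: str,
                        url: Optional[str] = None) -> None:
    """Deny by default: pass only if the tool and host are explicitly granted."""
    if tool not in agent.allowed_tools:
        raise PolicyViolation(f"{agent.agent_id} may not call tool '{tool}'")
    if url is not None:
        host = urlparse(url).hostname or ""
        if host not in agent.allowed_domains:
            raise PolicyViolation(f"{agent.agent_id} may not reach host '{host}'")


# Example grants for a meeting summarizer (all values are illustrative).
summarizer = AgentIdentity(
    agent_id="meeting-summarizer-01",
    allowed_tools=frozenset({"calendar.read", "http.get"}),
    allowed_domains=frozenset({"api.example.com"}),
)

# Allowed: a granted tool calling an allow-listed host.
authorize_tool_call(summarizer, "http.get", "https://api.example.com/v1/notes")
```

With these grants, a call such as `authorize_tool_call(summarizer, "finance.write")` raises `PolicyViolation`, which matches the example of a meeting summarizer that should never edit a financial database.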

The governance readiness checklist: 8 checkpoints

Governance ensures that AI agents remain aligned with business goals and legal requirements. With most of the EU AI Act's obligations scheduled to apply from August 2026, compliance is no longer optional for any company doing business in Europe.

A robust enterprise AI governance framework for 2026 includes the following:

- 1. Defined AI ownership: Who is responsible if an agent makes a $50,000 error? You must assign clear accountability to a human owner for every autonomous system.

- 2. Internal governance charter: Does your company have a written policy on what agents can and cannot do? This charter should be the North Star for all development teams.

- 3. Human-in-the-loop (HITL) thresholds: At what point must an agent stop and ask for permission? High-stakes actions (like moving money or hiring/firing) should always have a mandatory human approval gate.

- 4. Audit trails and decision logging: Can you reconstruct the agent's logic? Enterprise AI governance in 2026 requires explainability. You must log the prompt, the retrieved context, the tool called, and the final decision.

- 5. Regulatory alignment: Are you following the NIST AI Risk Management Framework? Ensure your deployments meet the specific requirements of the EU AI Act, including registration of high-risk systems.

- 6. Bias and fairness evaluations: Are your agents making biased decisions? Regularly test your agents with diverse datasets to ensure they are not reinforcing historical prejudices.

- 7. Vendor due diligence: If you use a third-party agent, have you reviewed their security and bias reports? You are still responsible for the actions of vendors acting on your data.

- 8. Board-level reporting: Is the board of directors aware of AI risks? Establish a quarterly cadence for reporting AI near-misses and compliance status to the board.
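
Checkpoints 3 and 4 lend themselves to a small example: a threshold check that decides when an agent must pause for approval, and a log record holding the four fields named above (prompt, retrieved context, tool called, final decision). This is a minimal sketch; the action names, the `APPROVAL_AMOUNT_USD` threshold, and both function names are assumptions chosen for illustration, not values from any regulation.

```python
import json
from datetime import datetime, timezone

# Hypothetical policy values; a real charter would define these.
HIGH_STAKES_ACTIONS = {"transfer_funds", "terminate_employee"}
APPROVAL_AMOUNT_USD = 10_000


def requires_human_approval(action: str, amount_usd: float = 0.0) -> bool:
    """Return True when the agent must stop and ask a human (checkpoint 3)."""
    return action in HIGH_STAKES_ACTIONS or amount_usd >= APPROVAL_AMOUNT_USD


def audit_record(agent_id: str, prompt: str, retrieved_context: list,
                 tool_called: str, decision: str) -> str:
    """Serialize one decision as a JSON line for the audit trail (checkpoint 4)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "prompt": prompt,
        "retrieved_context": retrieved_context,
        "tool_called": tool_called,
        "decision": decision,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    agent_id="refund-agent-02",
    prompt="Customer requests refund for order 1234",
    retrieved_context=["refund-policy-v3"],
    tool_called="issue_refund",
    decision="escalated_to_human",
)
```

Writing one JSON line per decision keeps the trail append-only and easy to ship to whatever log store the organization already uses.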

The scale readiness checklist: 7 checkpoints

Many companies fail when moving from one agent to one hundred. AI agent deployment at scale requires a different architectural approach than simple prototypes.

- 1. Compute and model serving infrastructure: Do you have the GPU capacity or API throughput to handle thousands of agent calls? Consider a model router to send simple tasks to small models and complex tasks to large ones to save on costs.

- 2. Orchestration frameworks: Are you using a standardized framework like LangGraph or a custom-built solution? Standardization is key to maintaining AI scalability in enterprise architecture.

- 3. Cost governance and token budgeting: Agents can be token hungry. Implement rate limits and kill switches to prevent an agent from getting stuck in an infinite loop that costs thousands of dollars in API fees.

- 4. The observability stack: Are you using specialized enterprise AI agent observability tools? You need more than just uptime monitoring; you need to track evals (how well the agent is performing its task) and drift (if the agent’s performance is degrading over time).

- 5. Agent catalogs: Do your teams know what agents already exist? A central catalog prevents agent sprawl, where five different teams build five different email summarizer agents.

- 6. Circuit-breaker patterns: If a downstream API is slow or down, how does the agent react? Implement circuit breakers to stop agents from repeatedly hammering a broken system.

- 7. Workforce readiness and change management: Are your employees ready to work alongside agents? Scaling requires training users on how to collaborate with and supervise their new digital colleagues.
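
Checkpoints 3 and 6 describe two guards that are simple to prototype: a token budget that acts as a kill switch, and a circuit breaker that stops calls to a failing downstream API. The sketch below assumes hypothetical class names and default thresholds; production systems would usually rely on their orchestration framework or gateway for this.

```python
import time


class BudgetExceeded(Exception):
    """Raised when an agent run would exceed its token allowance."""


class TokenBudget:
    """Hard cap on tokens per run; exceeding it aborts the run (checkpoint 3)."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self.used = 0

    def charge(self, tokens: int) -> None:
        if self.used + tokens > self.max_tokens:
            raise BudgetExceeded(f"budget of {self.max_tokens} tokens exhausted")
        self.used += tokens


class CircuitBreaker:
    """Blocks calls after repeated failures until a cool-down passes (checkpoint 6)."""

    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # After the cool-down, calls are allowed again as a trial.
        return self.clock() - self.opened_at >= self.reset_after_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            # Open (or re-open) the breaker and restart the cool-down.
            self.opened_at = self.clock()
```

An agent loop would call `budget.charge(n)` before each model call and check `breaker.allow()` before each tool call, recording success or failure afterwards.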

Industry-specific considerations

The best practices for agentic AI in regulated industries vary significantly based on the legal landscape of 2026.

Financial Services

Banks and fintechs face the highest pressure for explainability. If an AI agent denies a loan, the institution must be able to provide the specific reasoning used. Compliance with SOC 2 and PCI-DSS is the baseline, but the 2026 focus is on "Model Risk Management" (MRM) specifically for autonomous agents.

Healthcare

In healthcare, the average cost of a breach has reached a staggering $9.8 million. Agents here must adhere to strict HIPAA and FDA "Software as a Medical Device" (SaMD) regulations. The primary risk is clinical decision liability. If an agent provides medical advice, the legal responsibility stays with the provider.

Legal and Professional Services

For law firms and consultants, attorney-client privilege is the top concern. Sending client data to public LLM APIs may put privilege at risk, particularly if that data is used for training. In 2026, legal enterprises are shifting toward private, VPC-hosted agents to ensure data remains confidential.

Manufacturing and Supply Chain

Manufacturing has seen an 18% year-over-year increase in cyberattacks. Agents here operate at the "OT/IT boundary." An agent controlling a physical robot or a chemical valve has safety-critical implications. These systems require "physical fail-safes" that exist outside of the AI software itself.

Maturity model: Where does your enterprise sit?

Scoring your organization against the readiness checklist helps you determine which level of maturity it has reached.

- Level 1: Experimenting (Siloed Pilots)

  - Individual teams are using Shadow AI.

  - There is no central policy or security oversight.

  - Risk: High vulnerability to data leaks and unmanaged costs.

- Level 2: Scaling (Platform Teams Forming)

  - The organization has a centralized AI platform team.

  - Basic security controls (SSO, logging) are in place.

  - Risk: Inconsistent governance across different departments.

- Level 3: Governing (Enterprise-wide Standards)

  - A formal AI agent governance framework is active.

  - Compliance with the EU AI Act and NIST is documented.

  - Risk: Scaling bottlenecks due to manual approval processes.

- Level 4: Optimizing (Competitive Differentiation)

  - Agents are a core part of the business strategy.

  - Continuous evaluation and automated red-teaming are standard.

  - Outcome: Significant gains in operational efficiency and market share.

Your 25-point readiness scorecard

Building a readiness culture is a continuous process. As you move through 2026, the goal is to balance the speed of innovation with the stability of enterprise-grade operations.

Summary of the Checklist:

- Security (10 points): Focus on identity, least-privilege, and prompt injection defense.

- Governance (8 points): Focus on accountability, audit trails, and regulatory alignment.

- Scale (7 points): Focus on observability, cost management, and orchestration.

If you have checked fewer than 15 of these points, your organization is likely at a "Level 1" or "Level 2" maturity. To move forward, prioritize the Security block first. An insecure agent is a liability that can erase the productivity gains of the technology.
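
The scoring rule above is simple arithmetic: 10 + 8 + 7 = 25 possible points, with fewer than 15 suggesting Level 1 or Level 2. A small sketch makes it easy to track over time; the function and dictionary names here are hypothetical.

```python
# Maximum points per block, taken from the checklist summary above.
SECTION_MAX = {"security": 10, "governance": 8, "scale": 7}


def readiness_score(checked: dict) -> int:
    """Sum the checked points across the three blocks (maximum 25)."""
    total = 0
    for section, maximum in SECTION_MAX.items():
        count = checked.get(section, 0)
        if not 0 <= count <= maximum:
            raise ValueError(f"{section} must be between 0 and {maximum}")
        total += count
    return total


def prioritize_security_first(checked: dict) -> bool:
    """True when the score is below 15, the Level 1 or Level 2 range."""
    return readiness_score(checked) < 15


example = {"security": 6, "governance": 4, "scale": 3}
score = readiness_score(example)  # 6 + 4 + 3 = 13
```

In this example the organization scores 13 of 25, so `prioritize_security_first(example)` returns `True`.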

Are you ready to secure your agentic future? Visit NeuraHQ to learn how our platform helps enterprises manage AI agent observability, security, and governance at scale.

Frequently Asked Questions

What is the difference between AI automation and AI agents?

Traditional AI automation is rule-based and linear, executing predefined workflows using fixed logic. AI agents, on the other hand, are autonomous and reasoning-driven. They interpret goals, select tools, and determine the sequence of actions dynamically. While automation is predictable, AI agents are adaptive and capable of handling complex, changing scenarios.

How is the EU AI Act affecting enterprise AI deployments in 2026?

The EU AI Act has introduced strict compliance requirements for high-risk AI systems. Enterprises must now implement measures such as human oversight, detailed event logging, and bias testing. Compliance is mandatory for certain use cases, and failure to meet these standards can result in significant financial penalties, making regulatory alignment a core part of AI system design.

How do you implement least-privilege access for AI agents?

Least-privilege access is achieved by assigning each agent a unique identity, granting only task-specific permissions, and using time-bound credentials that expire after task completion. This minimizes risk by ensuring agents only access the data and systems required for their specific function.
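
As a rough illustration of the three ingredients in that answer (unique identity, task-specific permissions, time-bound credentials), the sketch below issues a short-lived scoped credential and checks it. The names `ScopedCredential`, `issue_credential`, and `is_authorized`, and the scope strings, are assumptions; a real deployment would delegate this to its identity provider or secrets vault.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ScopedCredential:
    """Hypothetical short-lived credential bound to one agent."""
    agent_id: str
    scopes: frozenset
    token: str
    expires_at: float  # seconds since the epoch


def issue_credential(agent_id: str, scopes, ttl_s: float = 300.0,
                     now: Optional[float] = None) -> ScopedCredential:
    """Issue a random token that is valid only for the given scopes and TTL."""
    issued = time.time() if now is None else now
    return ScopedCredential(
        agent_id=agent_id,
        scopes=frozenset(scopes),
        token=secrets.token_urlsafe(32),
        expires_at=issued + ttl_s,
    )


def is_authorized(cred: ScopedCredential, scope: str,
                  now: Optional[float] = None) -> bool:
    """Allow only unexpired credentials that carry the requested scope."""
    current = time.time() if now is None else now
    return current < cred.expires_at and scope in cred.scopes


cred = issue_credential("crm-reader-01", {"crm.read"}, ttl_s=60.0, now=1000.0)
```

Passing `now` explicitly is only there to make the expiry behaviour easy to test; callers would normally omit it.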

What is multi-agent orchestration and why does it matter?

Multi-agent orchestration involves multiple specialized AI agents working together to complete complex tasks. Each agent handles a specific responsibility, improving accuracy, scalability, and reliability. This approach reduces the risks associated with single, generalized systems and allows better monitoring and governance.

What tools are used for AI agent observability and monitoring?

Modern AI observability tools track decision paths, evaluate output quality, monitor resource usage, and detect performance drift. These systems help organizations ensure that AI agents remain accurate, efficient, and aligned with business and compliance requirements.
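
Drift detection, in its simplest form, compares recent evaluation scores against a baseline. The sketch below assumes scores between 0 and 1 from whatever eval harness is in use; `detect_drift` and the `max_drop` threshold of 0.05 are hypothetical choices for illustration, not a feature of any specific tool.

```python
from statistics import mean


def detect_drift(baseline_scores, recent_scores, max_drop: float = 0.05) -> bool:
    """Flag drift when the mean eval score falls by more than max_drop."""
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > max_drop


# Illustrative numbers only: a clear drop from roughly 0.91 to roughly 0.80.
drifted = detect_drift([0.90, 0.92, 0.91], [0.80, 0.82, 0.79])
```

A comparison of means is deliberately crude. Teams with enough eval volume may prefer a statistical test or a rolling window, but the alerting logic follows the same shape.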

Is NIST AI RMF required for enterprise compliance in 2026?

The NIST AI Risk Management Framework is not legally mandatory, but it is widely adopted as a best practice. Many organizations follow it to align with regulatory expectations, support compliance efforts, and meet requirements from partners, insurers, and government contracts.

Desperate times call for desperate Google/ChatGPT searches, right? "Best Shopify apps for sales." "How to increase online sales fast." "AI tools for ecommerce growth."

Been there. Done that. Installed way too many apps.

But here's what nobody tells you while you're doom-scrolling through Shopify app reviews at 2 AM—that magical online sales-boosting app you're searching for? It doesn't exist. Because if it did, Jeff Bezos would've bought (or built!) it yesterday, and we (fellow eCommerce store owners) would all be retired in Bali by now.

Growing a Shopify store and increasing online sales isn’t easy—we get it. While everyone’s out chasing the next “revolutionary” tool/trend (looking at you, DeepSeek), the real revenue drivers are probably hiding in plain sight—right there inside your customer data.

After working with Shopify stores like yours (shoutout to Cybele, who recovered almost 25% of their abandoned carts with WhatsApp automation), we’ve cracked the code on what actually moves the needle.

Ready to stop app-hopping and start actually growing your sales by using what you already have? Here are four fixes that will get you there!

Fix #1: Convert abandoned carts instantly (Like, actually instantly)

The Painful Truth: You're probably losing about 70% of your potential sales to cart abandonment. That's not just a statistic—it's real money walking out of your digital door. And looking for yet another Shopify app for abandoned cart recovery isn't going to fix it if you're not getting the fundamentals right.

The Quick Fix: Everyone knows you need multi-channel recovery that hits the sweet spot between "Hey, did you forget something?" and "PLEASE COME BACK!" But here's the reality—most recovery apps are a one-trick pony. They either do email OR WhatsApp, not both. And don't even get us started on personalizing offers based on cart value—that usually means toggling between three different dashboards while praying your apps talk to each other.

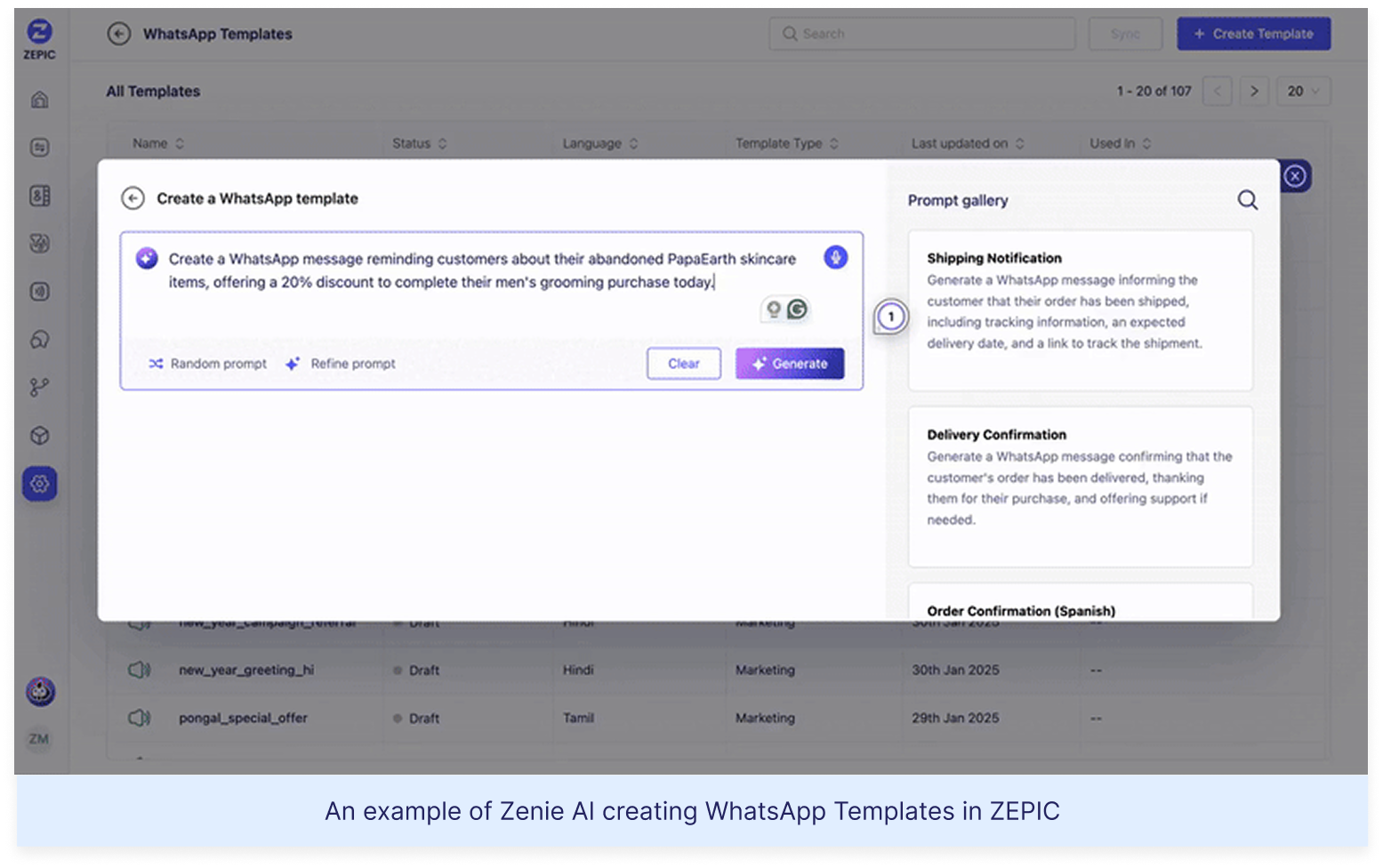

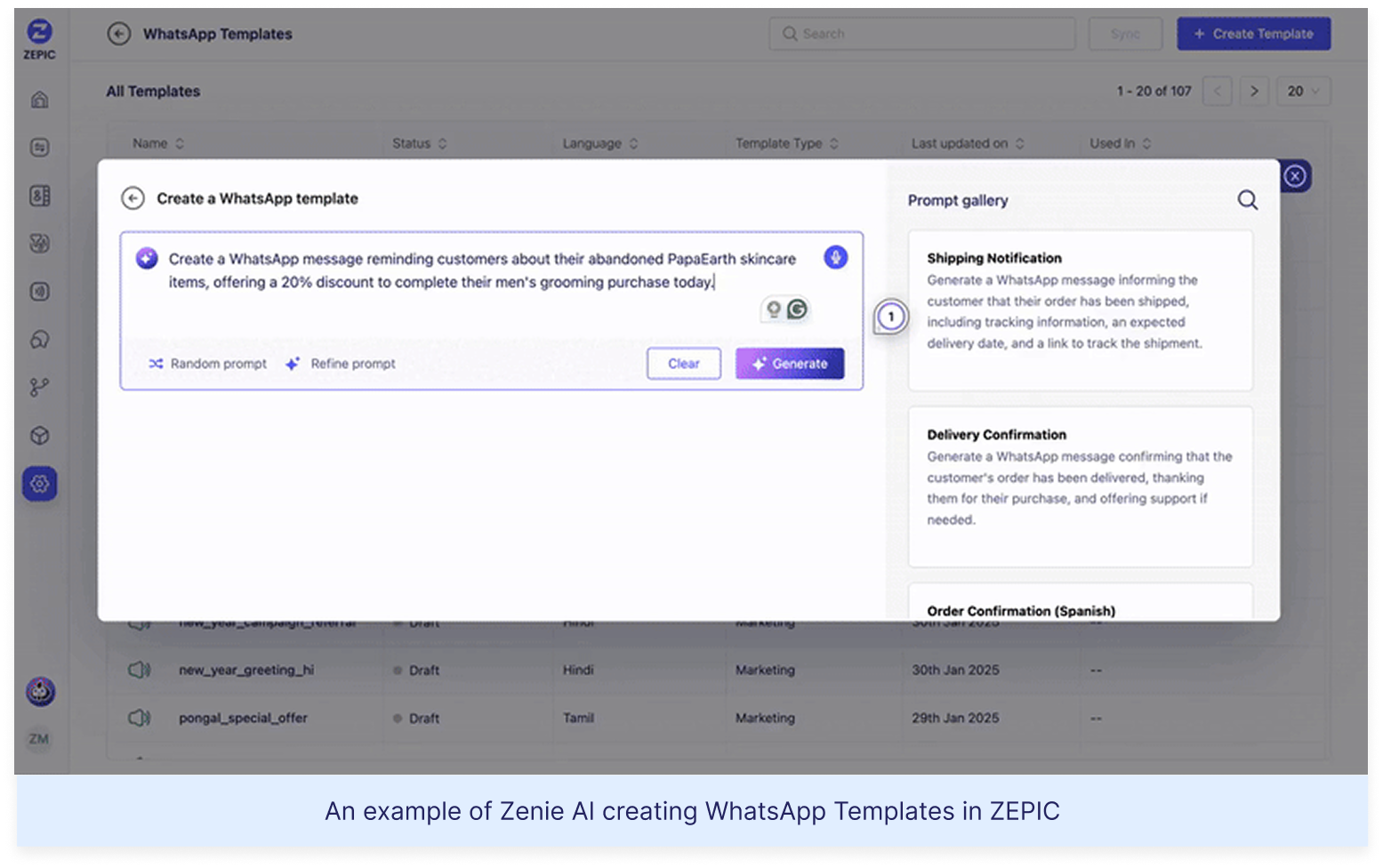

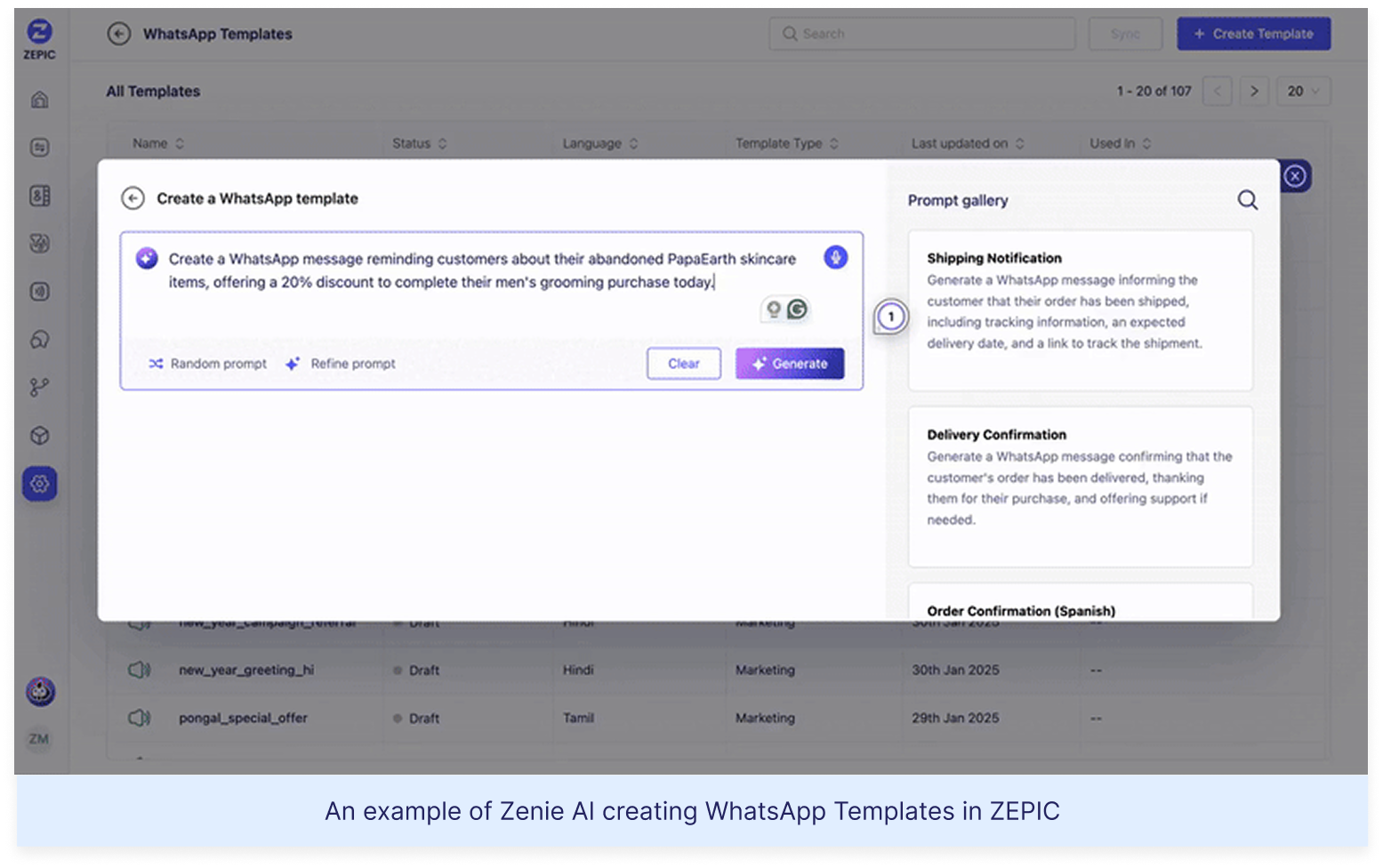

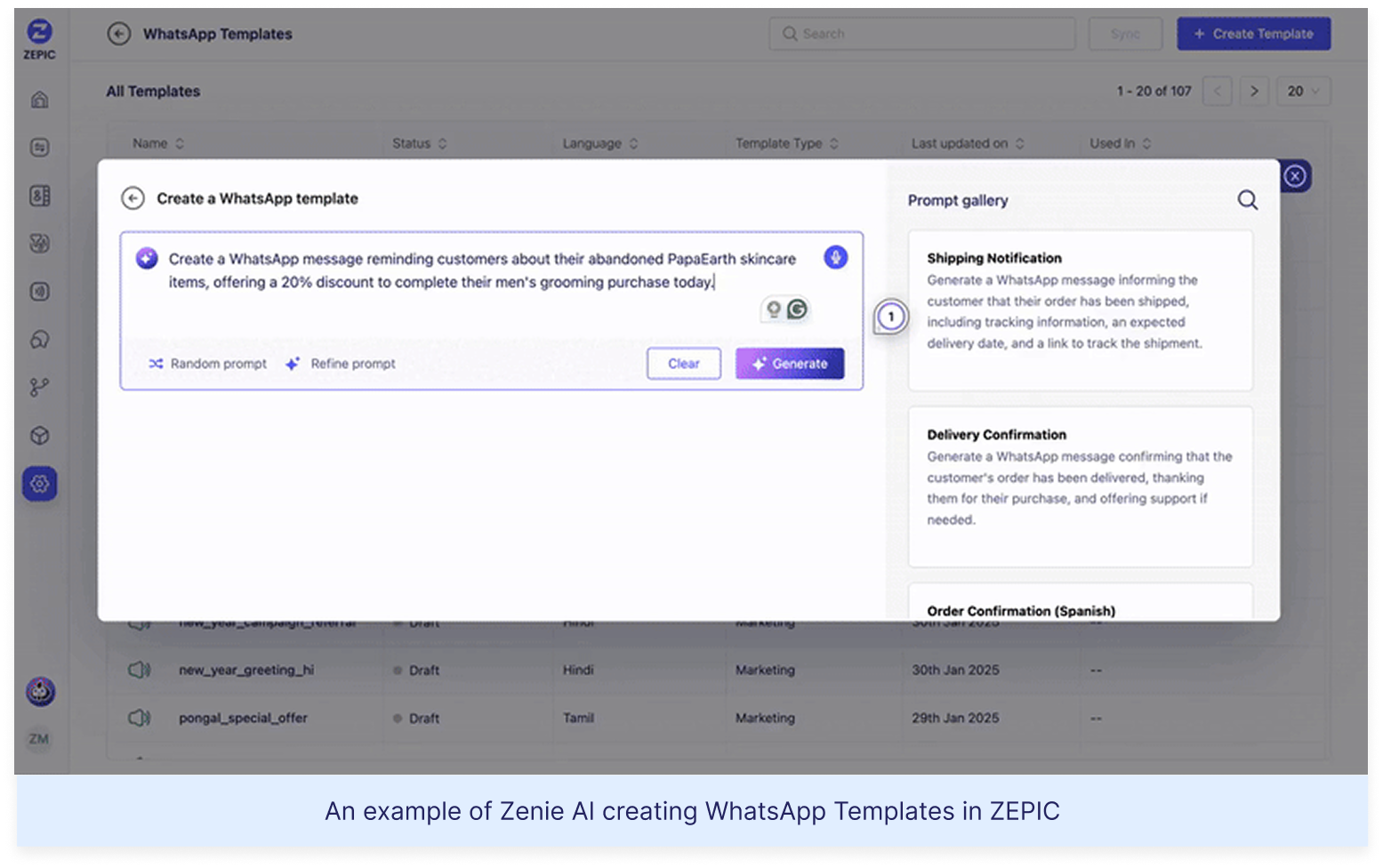

Enter ZEPIC: This is where we come in. With ZEPIC's automated Flows, you can:

- Launch WhatsApp recovery messages (with 95% open rates!)

- Set up perfectly timed email sequences (or vice versa)

- Create personalized recovery offers not just on cart value but based on your customer’s behavior/preferences

- Track and optimize everything from one dashboard

Fix #2: Reactivate past customers today


Offering light at the end of the tunnel is Google’s Privacy Sandbox, which seeks to ‘create a thriving web ecosystem that is respectful of users and private by default’. As the name suggests, your Chrome browser takes the role of a ‘privacy sandbox’ that holds all your data (visits, interests, actions, etc.) and discloses it to other websites and platforms only with your explicit permission. If you have not done so yet, we recommend testing your websites, audience relevance, and advertising attribution with Chrome’s trial of the Privacy Sandbox.

Top 3 impacts of the third-party cookie phase-out

| Who’s impacted | How | What next |
| --- | --- | --- |
| Digital advertising and acquisition teams | Lack of cookie data results in a drastic fall in website traffic and conversion rate | Review all cookie-based audience acquisition. Sign up for Chrome’s trial of the Privacy Sandbox |
| Digital Customer Experience | Customers are not served relevant, personalised experiences on the web, over social channels, and across communication media | Multiply efforts to collect first-party customer data. Implement a Customer Data Platform |
| Security, Privacy and Compliance teams | Increased scrutiny from regulators and questions from customers about data storage and usage | Review current cookie and communication consent management, and align it with the latest privacy regulations |
